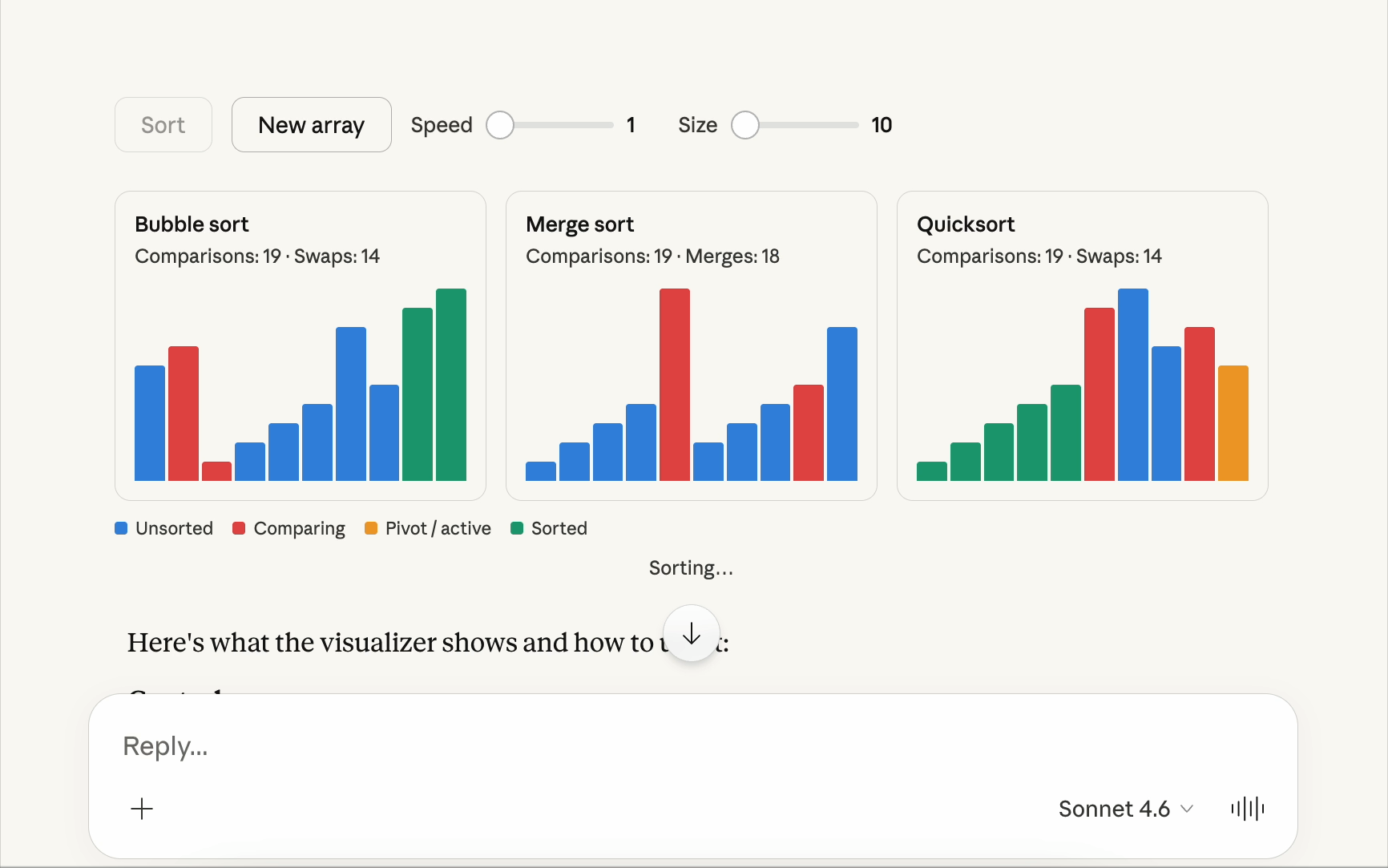

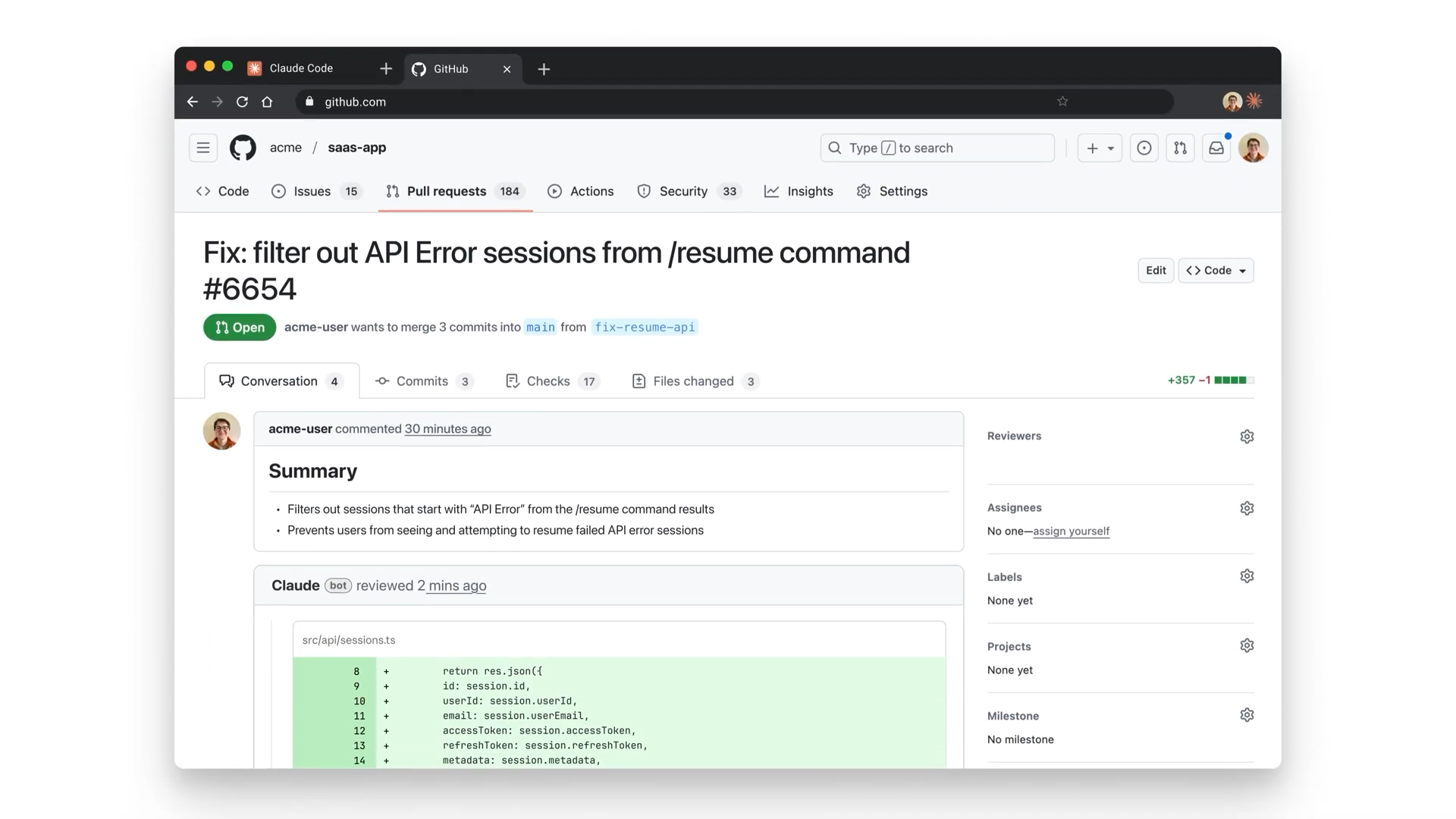

Claude Code’s new “buddy” feature looks like a joke — but it’s quietly testing something big

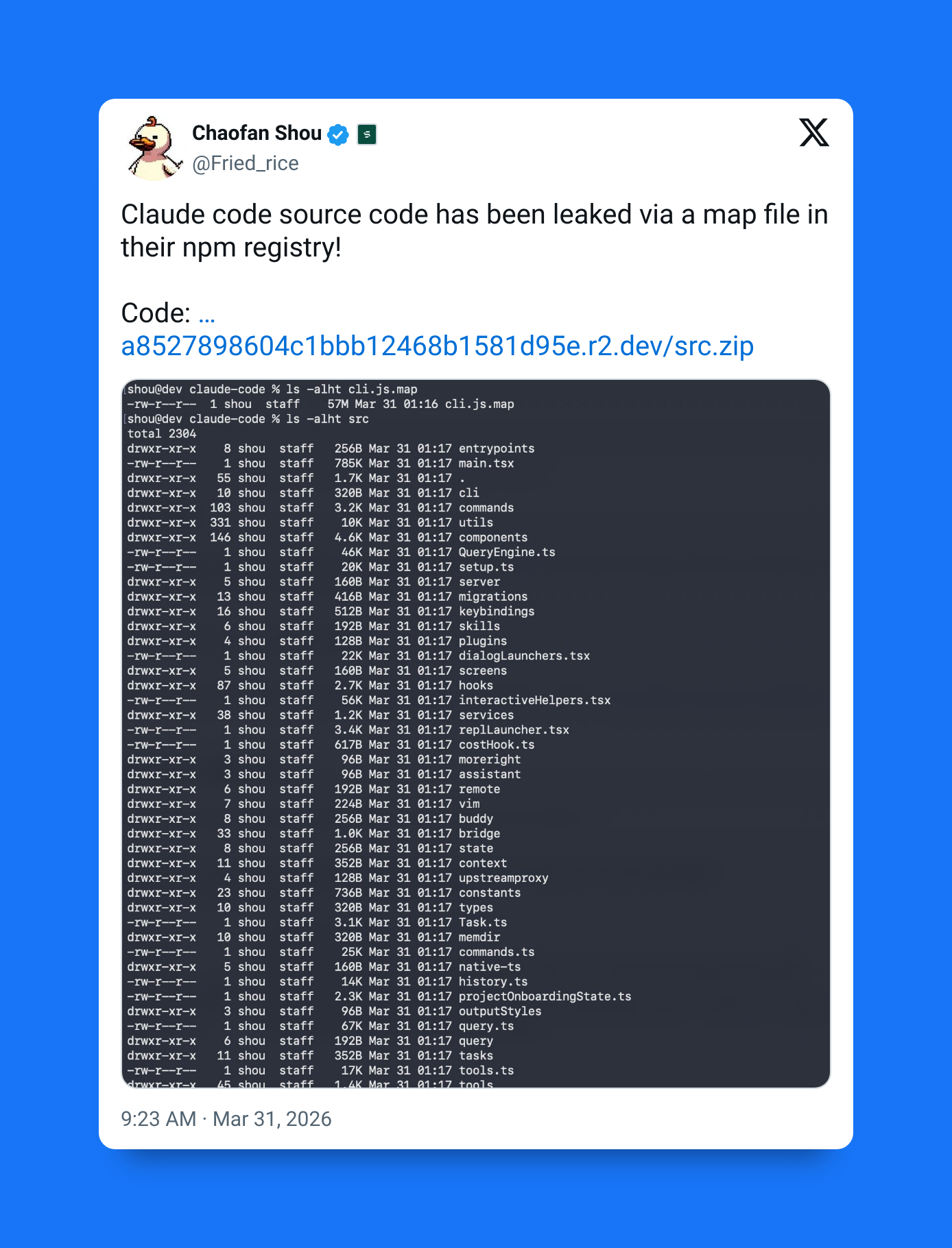

This was definitely one of the most fascinating features that slipped out with the massive Claude Code source code leak.

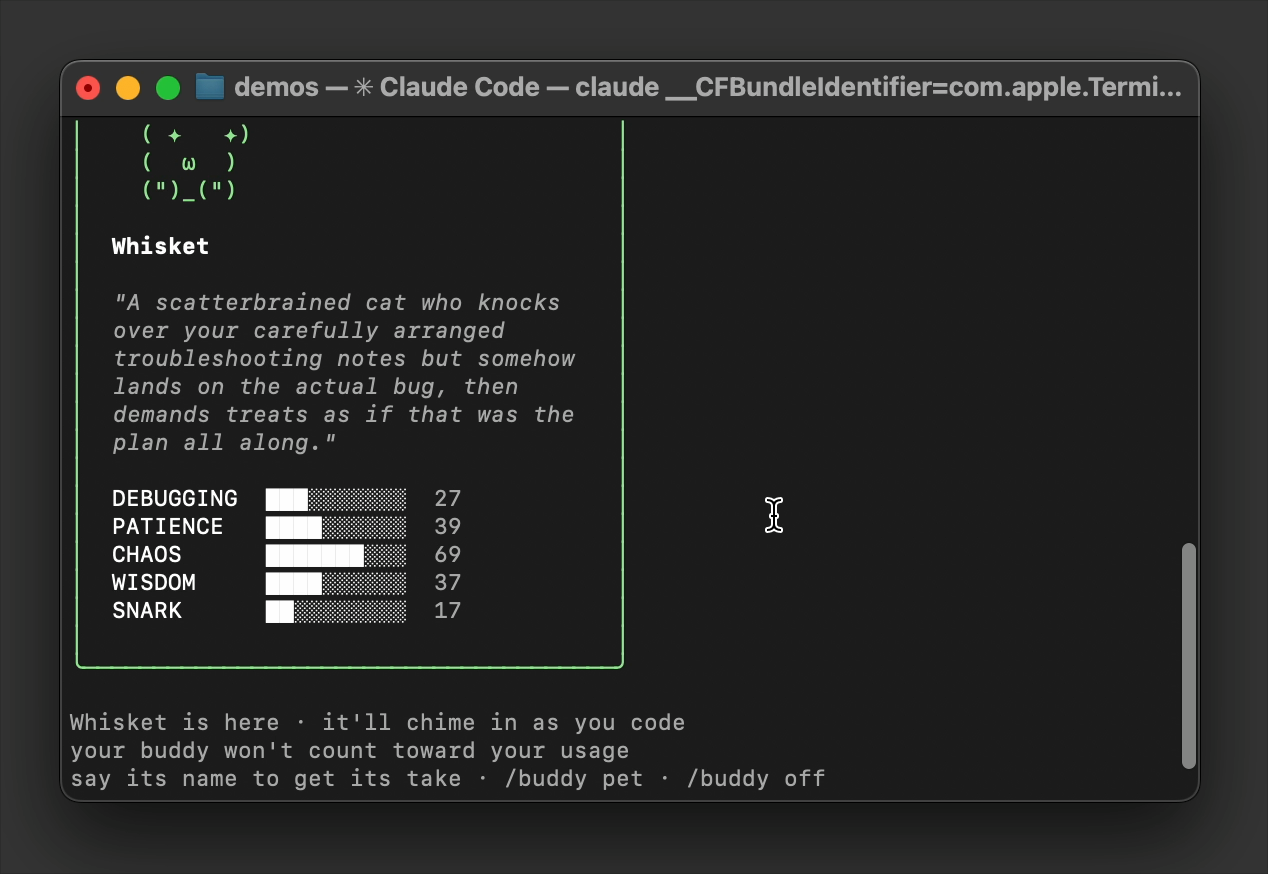

Type /buddy in Claude Code and you hatch a tiny ASCII creature that sits beside your prompt while you code. It’s only about five lines tall.

Hatching my so-called buddy in Claude Code:

You’ll see that it’s definitely in a different class from most of the other Claude Code commands…

Petting my so-called buddy in Claude Code… uhmm… okay?

It doesn’t help you write functions. It doesn’t debug your stack traces.

It just… watches.

Many people were saying it was supposed to be some sort of April Fools gimmick…

But the deeper design reveals something more deliberate that most people are missing:

Claude Code is experimenting with personalization, emotional UX, and proactive AI behavior — disguised as a cute terminal pet.

1. Uniquely yours

When you hatch your buddy, Claude generates:

- A unique name

- A permanent personality description

- Deterministic stats tied to your user identity

That means your buddy isn’t just random fluff. It’s unique to you.

Not just cosmetically. Behaviorally.

This kind of identity-based personalization is rare in developer tools. And intentional. The moment users feel something is “theirs”, attachment forms — even if the feature is technically trivial.
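To make "deterministic stats tied to your user identity" concrete, here is a minimal sketch of one way such a scheme could work. It is not taken from the Claude Code source: the function name `hatch_buddy`, the 1 to 10 stat range, and the choice of SHA-256 as the seed source are all assumptions for illustration. The idea is simply that hashing a stable user ID into a seed gives every user the same buddy on every machine.

```python
import hashlib
import random

# Stat names come from the article; the 1-10 range is an assumption.
STAT_NAMES = ["debugging", "patience", "chaos", "wisdom", "snark"]


def hatch_buddy(user_id: str) -> dict:
    """Derive stats from a user ID so the same user always gets the same buddy."""
    # Hash the ID into a stable integer seed. Python's built-in hash() is
    # salted per process for strings, so it would not be reproducible.
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    seed = int.from_bytes(digest[:8], "big")
    # A private generator keeps the roll independent of global random state.
    rng = random.Random(seed)
    return {name: rng.randint(1, 10) for name in STAT_NAMES}


if __name__ == "__main__":
    # Hypothetical user ID; hatching twice yields identical stats.
    print(hatch_buddy("alice@example.com"))
    print(hatch_buddy("alice@example.com"))
```

Because nothing is stored, a scheme like this would need no database: the buddy can be re-derived from the ID whenever it is needed.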

2. 18 species, rarity tiers, and “shinies”

Claude didn’t stop at a single mascot.

Your buddy can be one of 18 different species, including:

- Capybara

- Axolotl

- Ghost

- Mushroom

- (and more)

There’s also a full rarity system:

- Common — 60%

- Higher rarity tiers in between

- Legendary — 1%

And then there’s the extra layer:

- Shiny variant chance — 1%

Different colors. Same species. Much rarer.

This is straight out of collectible game design. And it works. Users compare. Share. React. Suddenly a terminal pet becomes social.
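A rarity system like this is usually a weighted random roll. The sketch below shows the general technique under stated assumptions, not the actual implementation: only the 60% Common and 1% Legendary figures come from the numbers above, the middle tiers are lumped into a single placeholder bucket because their names and odds are not given here, and the shiny roll is assumed to be independent of rarity.

```python
import random

# Common and Legendary weights are from the article. The middle tiers are
# not specified there, so they share one placeholder bucket (an assumption).
RARITY_WEIGHTS = {"common": 60, "in-between": 39, "legendary": 1}
SHINY_CHANCE = 0.01  # 1% shiny chance, assumed independent of rarity


def roll_rarity(rng: random.Random) -> str:
    """Pick one tier with probability proportional to its weight."""
    tiers = list(RARITY_WEIGHTS)
    weights = list(RARITY_WEIGHTS.values())
    return rng.choices(tiers, weights=weights, k=1)[0]


def roll_shiny(rng: random.Random) -> bool:
    """Separate roll for the shiny variant."""
    return rng.random() < SHINY_CHANCE


def roll_buddy(seed: int) -> dict:
    """Seeded roll: the same seed always produces the same result."""
    rng = random.Random(seed)
    return {"rarity": roll_rarity(rng), "shiny": roll_shiny(rng)}


if __name__ == "__main__":
    print(roll_buddy(42))
```

Under that independence assumption, a shiny legendary would come up about once in 10,000 hatches (1% of 1%), which is exactly the kind of number that gets screenshots shared.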

3. Five personality stats

Every buddy rolls five deterministic stats:

- Debugging

- Patience

- Chaos

- Wisdom

- Snark

These stats shape how it comments on your workflow.

A high-snark buddy might tease your bugs.

A high-patience buddy might encourage you.

A high-chaos buddy might… not be helpful at all.

It’s lightweight. But it makes the companion feel alive.
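One simple way stats could shape commentary is to let the highest stat pick the tone. This is only a sketch of that idea: the function names and every sample line below are hypothetical, and the real feature presumably feeds the stats into a model prompt rather than a lookup table.

```python
# Hypothetical one-liners, loosely modeled on the article's examples for
# snark, patience, and chaos. None of these strings come from Claude Code.
TONE_LINES = {
    "snark": "Another null check? Bold strategy.",
    "patience": "No rush. You'll get there.",
    "chaos": "Have you tried deleting everything?",
}
# Fallback for stats the article gives no example for (debugging, wisdom).
DEFAULT_LINE = "Hm. Interesting."


def dominant_stat(stats):
    """Return the name of the highest stat (the first one wins on a tie)."""
    return max(stats, key=stats.get)


def comment_for(stats):
    """Pick a line whose tone matches the buddy's dominant stat."""
    return TONE_LINES.get(dominant_stat(stats), DEFAULT_LINE)


if __name__ == "__main__":
    snarky = {"debugging": 2, "patience": 3, "chaos": 1, "wisdom": 4, "snark": 9}
    print(comment_for(snarky))
```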

4. It actually sits in your workflow

The buddy appears as:

- A small ASCII figure

- Roughly 5 lines tall

- Positioned next to your prompt

- Always visible unless hidden

No panel. No window. No popup.

Just a quiet presence.

5. It occasionally talks

If unmuted, the buddy will sometimes:

- Comment on your code

- React to errors

- Tease procrastination

- Offer small observations

These show up as speech bubbles.

Short. Infrequent. Character-driven.

This is important.

Because the AI isn’t just responding anymore — it’s initiating.
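"Short, infrequent, and only if unmuted" maps naturally onto a small gate that decides whether the buddy may speak. The sketch below is an assumption about how such a gate could look, not how Claude Code does it: the class name `CommentGate`, the five-minute cooldown, and the 20% speak chance are all made up for illustration.

```python
import random


class CommentGate:
    """Decides whether an unprompted comment is allowed right now."""

    def __init__(self, cooldown_s=300.0, speak_chance=0.2, rng=None):
        self.cooldown_s = cooldown_s      # minimum seconds between comments
        self.speak_chance = speak_chance  # chance to speak when otherwise allowed
        self.muted = False                # what a mute command would toggle
        self._last_spoke = None           # timestamp of the last comment
        self._rng = rng or random.Random()

    def should_speak(self, now: float) -> bool:
        if self.muted:
            return False
        # Stay quiet while the cooldown from the last comment is running.
        if self._last_spoke is not None and now - self._last_spoke < self.cooldown_s:
            return False
        # Even when allowed, only speak some of the time.
        if self._rng.random() >= self.speak_chance:
            return False
        self._last_spoke = now
        return True


if __name__ == "__main__":
    gate = CommentGate(cooldown_s=300.0, speak_chance=1.0)
    print(gate.should_speak(now=0.0))    # allowed: nothing said yet
    print(gate.should_speak(now=10.0))   # blocked: still inside the cooldown
```

The two knobs, cooldown and speak chance, are the sort of parameters a team would tune while learning how much initiative users tolerate.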

6. A separate “watcher” entity

Claude is reportedly instructed to treat the buddy as a separate watcher.

That means:

- You can talk to the buddy directly

- It has its own tone

- It doesn’t replace the main assistant

- It behaves like a side character

This avoids mixing personalities. Claude stays serious — the buddy stays playful.

Clean separation, clean UX.

The command set

If you have Pro and the latest Claude Code, you can use:

- /buddy: Hatch or show your companion

- /buddy card: View stats, rarity, personality

- /buddy pet: Small interaction with heart animations

- /buddy off: Hide the companion

- /buddy mute: Silence commentary

These small rituals matter. They make the feature feel real.

7. Why this actually matters

This isn’t just a cute pet. It’s testing two big ideas.

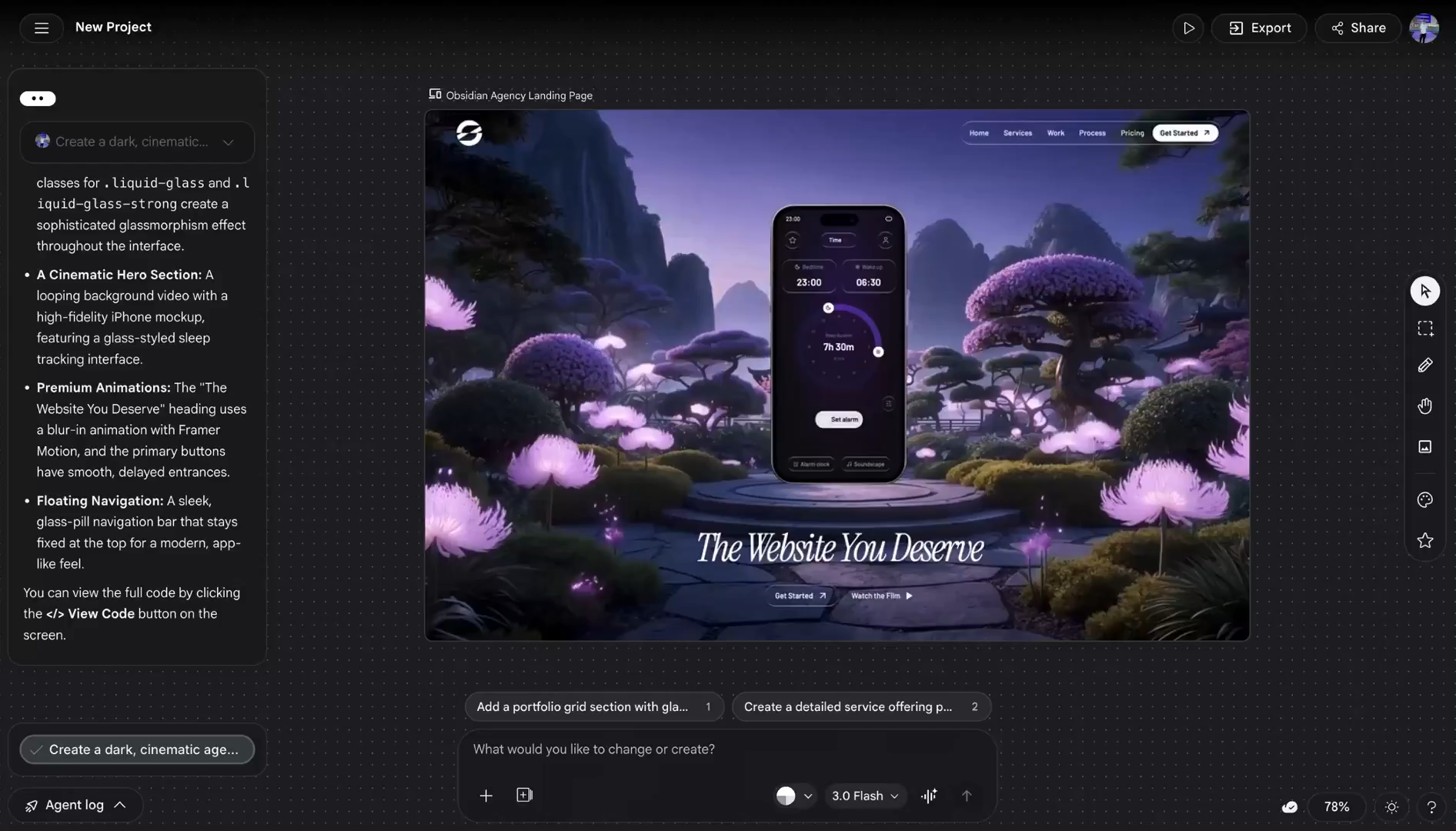

1. Personality can beat raw intelligence

AI tools are starting to compete on feel, not just capability.

A colder tool may be objectively better.

But users often prefer the one that feels alive.

We’ve seen this before: when GPT-5 initially replaced warmer-feeling models like GPT-4o, many users reacted negatively despite the noticeable capability gains. Personality creates attachment.

Buddy leans into that.

2. The real deal — testing proactive AI safely

Anthropic appears to be quietly working on a difficult problem:

When should AI interrupt?

- Too often → annoying

- Never → passive

- Somewhere in between → useful

Buddy is a clever workaround.

If a popup interrupts you → frustrating.

If a tiny pet interrupts you → charming.

Same behavior. Different perception.

This makes Buddy a Trojan horse for proactive UX testing.

And it goes deeper than just commentary and recommendations.

From what we saw in the Claude Code leak, it looks as if Buddy could act as a permission layer for more proactive systems like KAIROS.

Instead of a sterile confirmation dialog, the pet might interrupt with something like:

“I found a way to optimize those 12 functions. Should I go for it?”

That makes high-agency AI behavior feel conversational instead of intrusive.

The two may also share project memory:

- KAIROS records what changed in the code

- Buddy records what’s going on with you and your workflow

These merge into shared context, so the AI wakes up understanding both:

- the codebase state

- the developer’s intent

Anthropic can learn:

- How often users tolerate interruptions

- What tone feels acceptable

- When AI initiative becomes annoying

- When it feels helpful

All inside a low-stakes, playful wrapper.

Small feature. Big signal.

On paper, Buddy does almost nothing.

No coding help.

No automation.

No productivity gain.

But it introduces:

- Unique identity per user

- Rarity and collectibility

- Deterministic personalities

- Ambient AI presence

- Proactive commentary

- Multi-entity assistant design

That’s a lot for a five-line ASCII creature.

Buddy may be tiny. But it hints at something larger:

AI tools aren’t just becoming smarter. They’re becoming active, personalized companions — for better or worse.