Stop writing code comments

Most comments are actually a sign of bad code.

In the vast majority of cases, developers use comments to explain terribly written code that desperately needs a refactor.

But good code should explain itself. It should tell the full story.

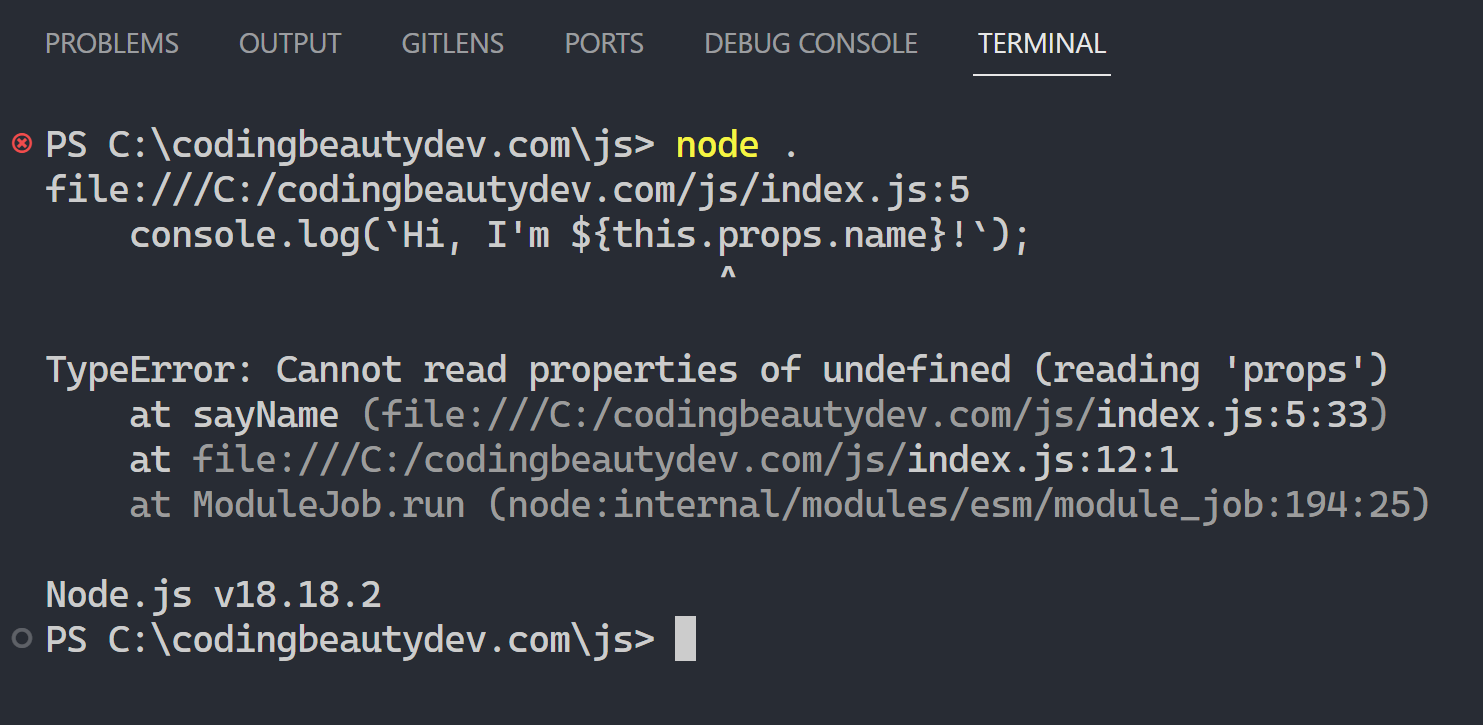

❌ Before:

You did too much in one go and you know it — so you drop in a bad comment to explain yourself:

// Check if user can watch video

if (

!user.isBanned &&

user.pricing === 'premium' &&

user.isSubscribedTo(channel)

) {

console.log('Playing video');

}

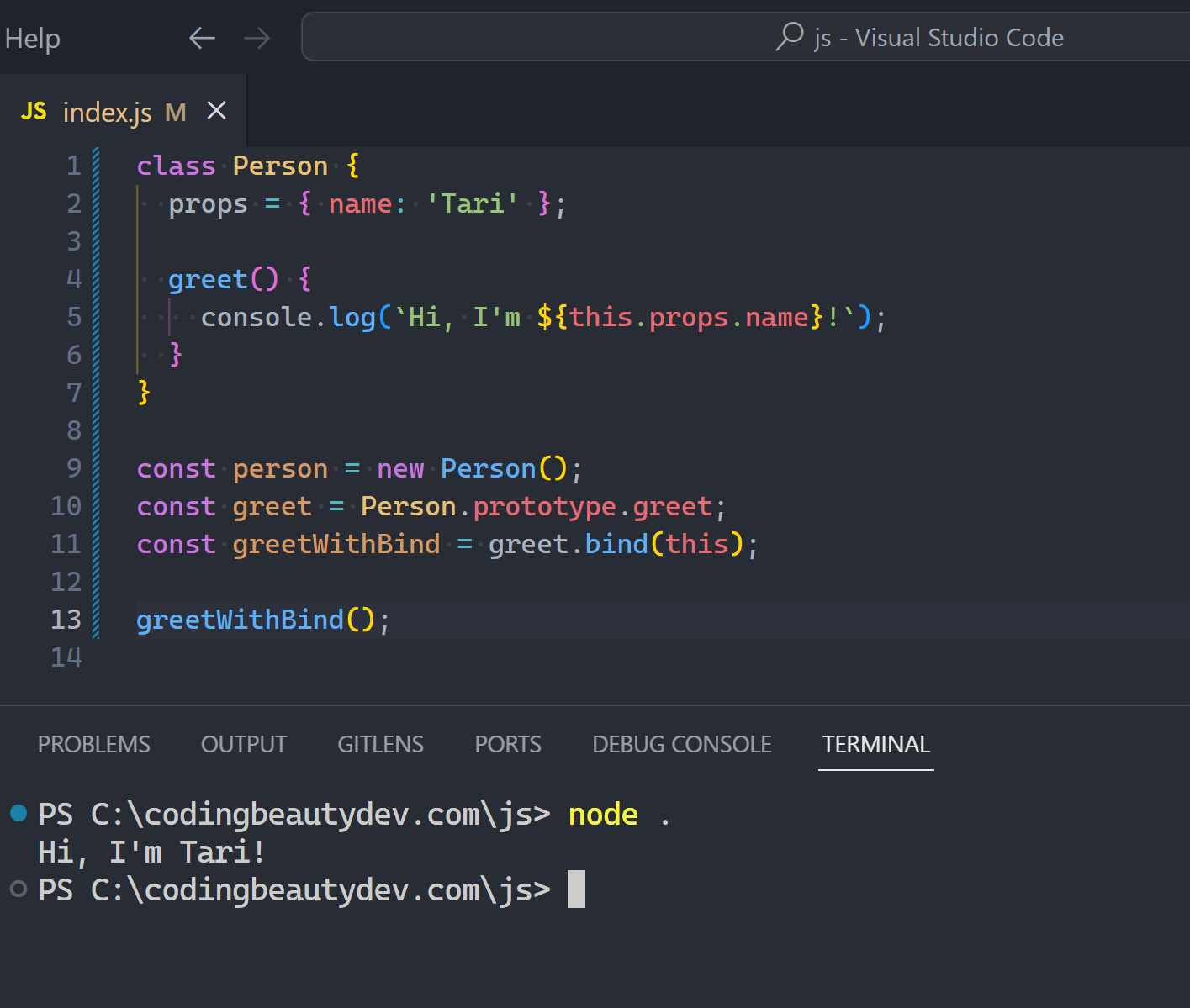

✅ After:

Now you take things step by step, creating a clear and descriptive variable before using it. Comment gone:

const canUserWatchVideo =

!user.isBanned &&

user.pricing === 'premium' &&

user.isSubscribedTo(channel);

if (canUserWatchVideo) {

console.log('Playing video');

}

Notice that the variable is there mainly for readability rather than for storing data. It’s a cosmetic variable, not a functional one.

✅ Or even better, you abstract the logic away into a function:

if (canUserWatchVideo(user, channel)) {

console.log('Playing video');

}

function canUserWatchVideo(user, channel) {

return (

!user.isBanned &&

user.pricing === 'premium' &&

user.isSubscribedTo(channel)

);

}

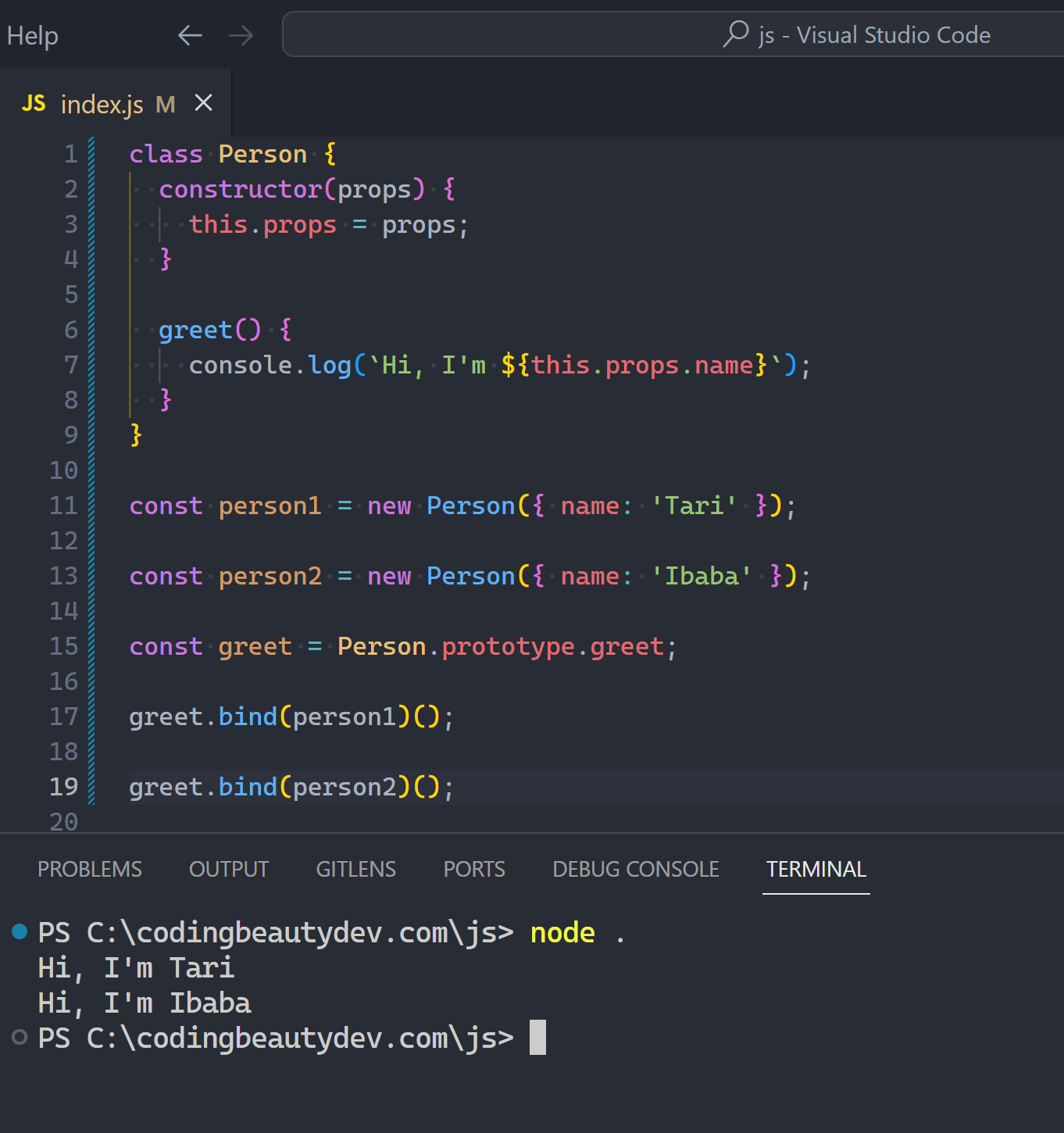

Or maybe it belongs in the class itself:

if (user.canWatchVideo(channel)) {

console.log('Playing video');

}

class User {

canWatchVideo(channel) {

return (

!this.isBanned &&

this.pricing === 'premium' &&

this.isSubscribedTo(channel)

);

}

}

Whichever one you choose, they all have one thing in common: they break complex code down into descriptive, nameable, self-explanatory steps, eradicating the need for comments.
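For reference, here is a minimal end-to-end sketch of the class version that runs on its own. The constructor shape and the `subscriptions` set are assumptions made purely for illustration; the snippets above only show `isBanned`, `pricing`, and `isSubscribedTo`.

```javascript
// Hypothetical User model: the constructor shape and the `subscriptions`
// Set are assumptions for illustration, not part of the original snippet.
class User {
  constructor({ isBanned = false, pricing = 'free', subscriptions = [] } = {}) {
    this.isBanned = isBanned;
    this.pricing = pricing;
    this.subscriptions = new Set(subscriptions);
  }

  isSubscribedTo(channel) {
    return this.subscriptions.has(channel);
  }

  canWatchVideo(channel) {
    return (
      !this.isBanned &&
      this.pricing === 'premium' &&
      this.isSubscribedTo(channel)
    );
  }
}

const subscriber = new User({ pricing: 'premium', subscriptions: ['cooking'] });
const bannedUser = new User({
  isBanned: true,
  pricing: 'premium',
  subscriptions: ['cooking'],
});

console.log(subscriber.canWatchVideo('cooking')); // true
console.log(subscriber.canWatchVideo('gaming')); // false
console.log(bannedUser.canWatchVideo('cooking')); // false
```

The call site still reads like a sentence, and the rule lives in exactly one place.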

When you write comments you defeat the point of having expressive, high-level languages. There is almost always a better way.

You give yourself one more thing to think about: you must update the comment whenever you update the code. You must make sure the comment and the code it refers to stay in sync throughout the lifetime of the codebase.

And what happens when you forget? You bring unnecessary confusion to your future self and fellow developers.
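A small illustration of that drift, using made-up names (`MAX_FAILED_ATTEMPTS` and `isAccountLocked` are hypothetical). Assume the limit used to be 3, then someone changed the constant and left the comment behind:

```javascript
// Lock the account after 3 failed attempts
const MAX_FAILED_ATTEMPTS = 5; // the value was changed, the comment above was not

function isAccountLocked(failedAttempts) {
  return failedAttempts >= MAX_FAILED_ATTEMPTS;
}

console.log(isAccountLocked(3)); // false, despite what the first comment promises
console.log(isAccountLocked(5)); // true
```

The constant's name already says everything the stale comment was trying to say, and it can't go out of date.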

Why not just let the code do all the talking? Let code be the single source of truth.

Your var names are terrible

❌ Before: Lazy variable naming, so now you’re using a comment to cover it up:

// Calculate volume using length, width, and height

function calculate(x, y, z) {

return x * y * z;

}

calculate(10, 20, 30);

✅ After: Self-explanatory variables:

function calculate(length, width, height) {

return length * width * height;

}

calculate(10, 20, 30);

✅ Even better, you rename the function too:

function calculateVolume(length, width, height) {

return length * width * height;

}

calculateVolume(10, 20, 30);

✅ And yet another upgrade: named arguments

function calculateVolume({ length, width, height }) {

return length * width * height;

}

calculateVolume({ length: 10, width: 20, height: 30 });

Do you see how we’ve completely exterminated comments?

Just imagine if the comment were still there:

// Calculate volume using length, width, and height

function calculateVolume({ length, width, height }) {

return length * width * height;

}

Can you see how pointless this comment is now?

And yet we have developers writing tons of redundant comments just like that.

I think beginners are especially prone to this, as they’re still forming the “mental model” needed to intuitively understand raw code.

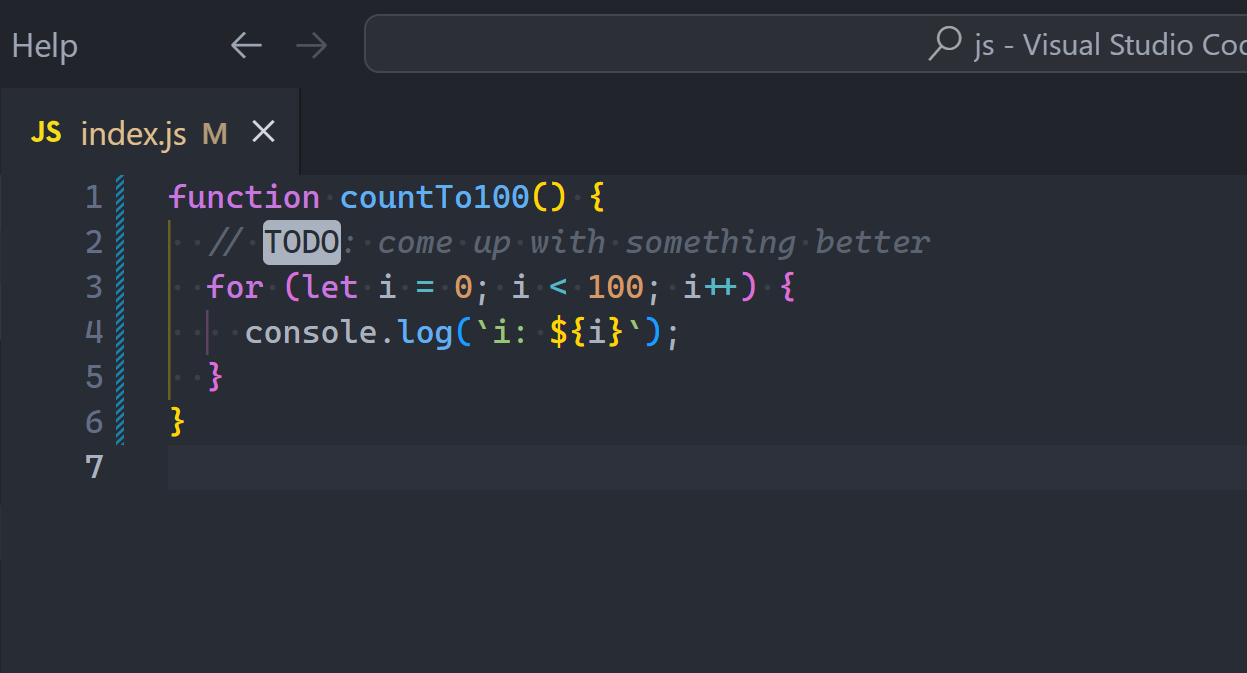

So they comment practically everything, giving us pseudocoded code:

// Initialize num to 1

let num = 1;

// Print value of num to the console

console.log(`num is ${num}`);

But after coding for a bit, the redundancy of this becomes clear and laughable, worthy of r/ProgrammerHumor.

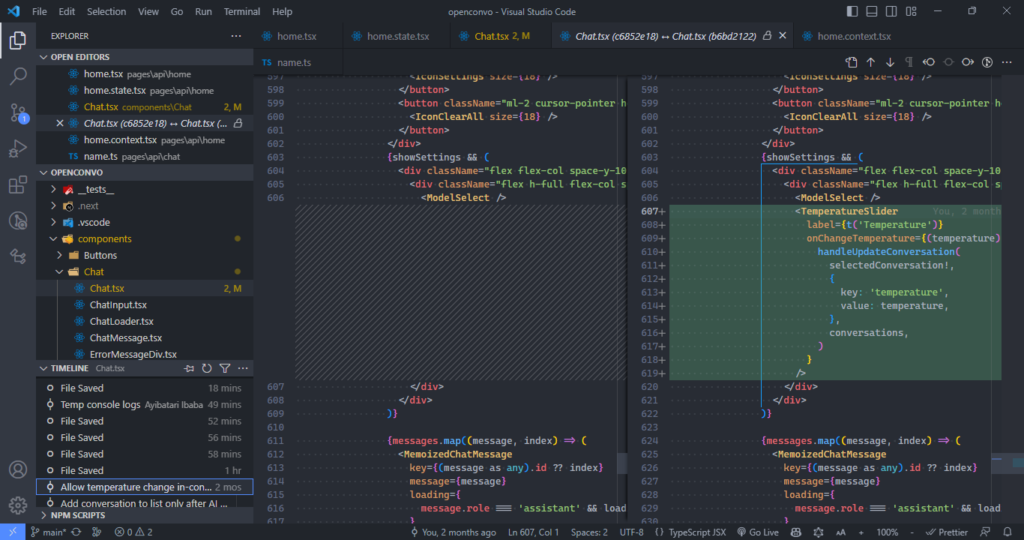

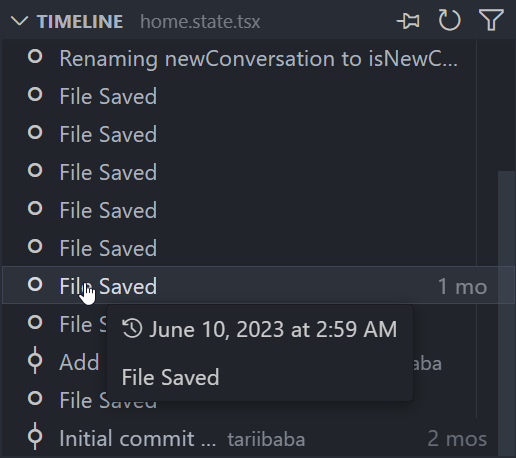

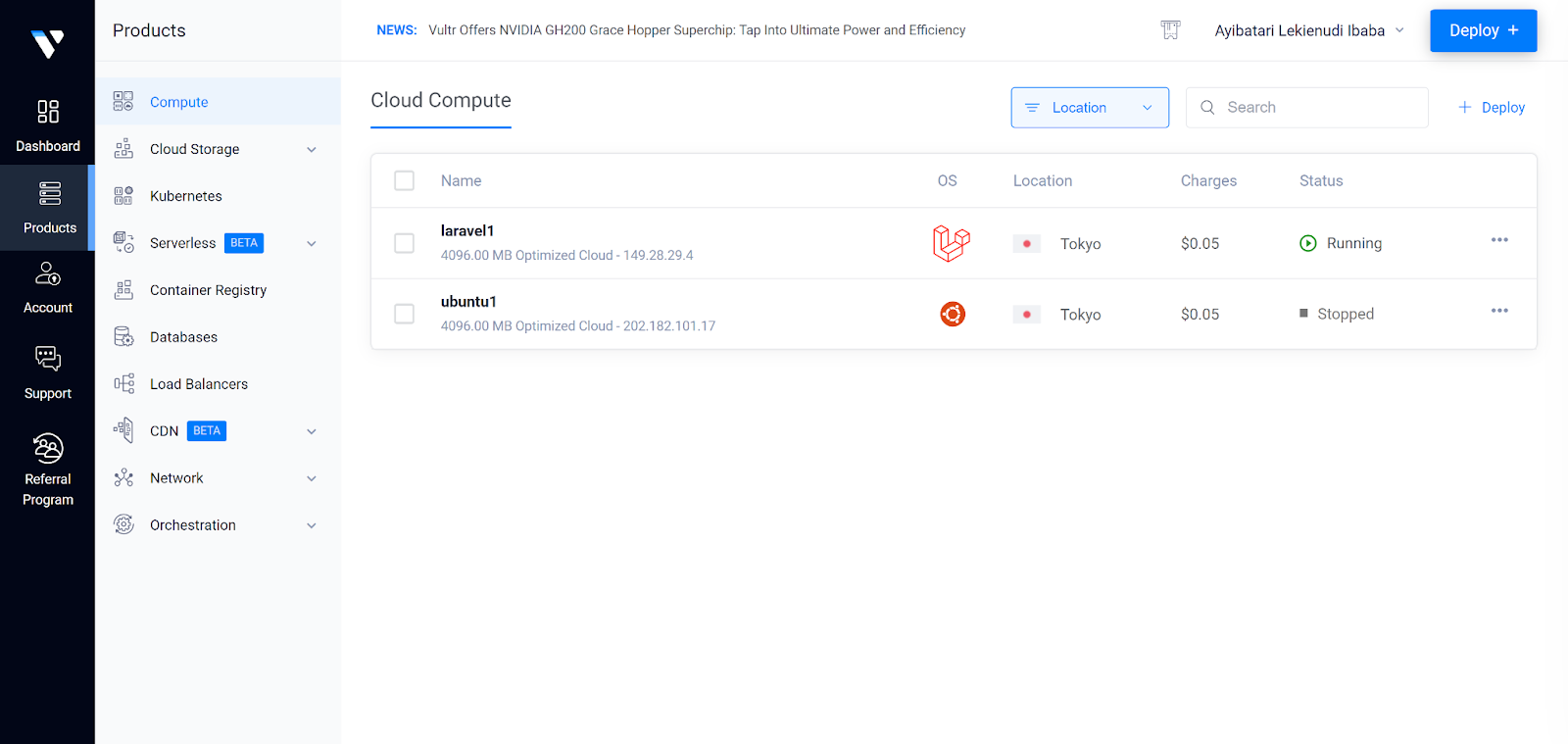

Delete commented-out code

It’s ugly and wishy-washy.

You did it because you were scared you’d need it in the future and have to start all over from scratch.

You should have used Git.

Okay, you’re already using Git. Well, then you shouldn’t have written such terrible commit messages, and you should have committed regularly.

You knew that if you deleted the code, it’d be hell to dig through your vaguely written commits to find where it last existed in the codebase.

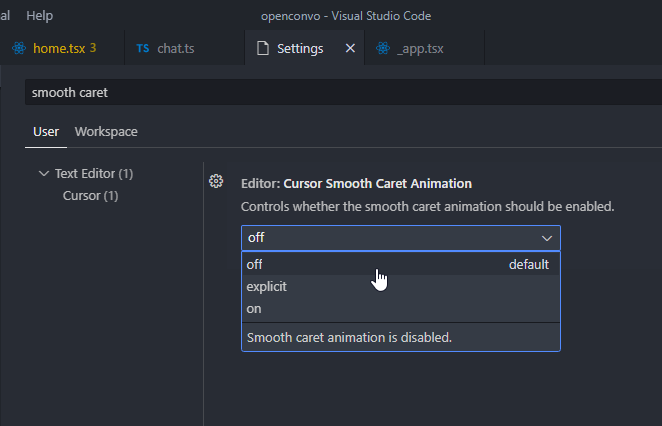

Comments worth writing?

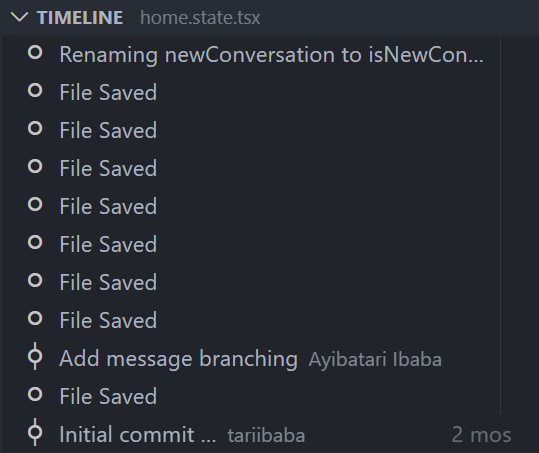

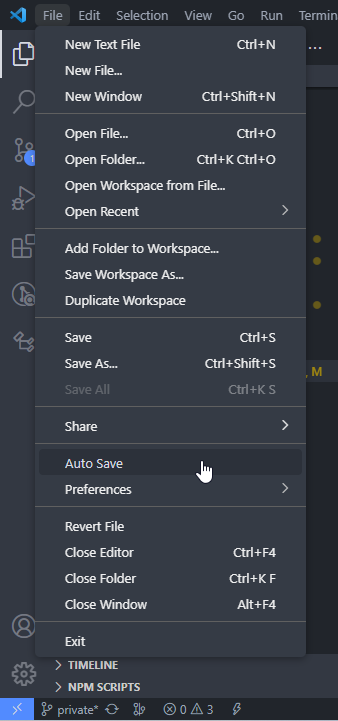

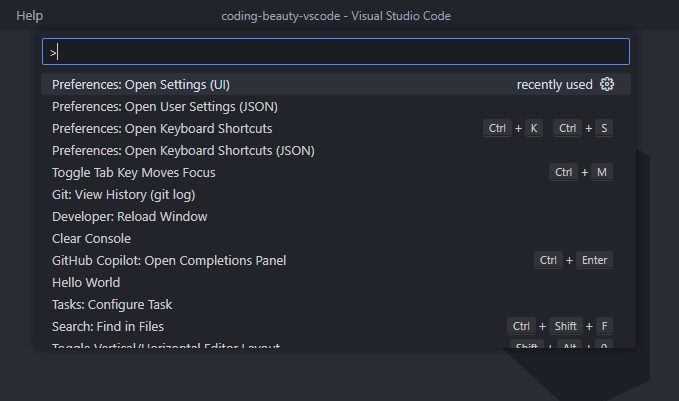

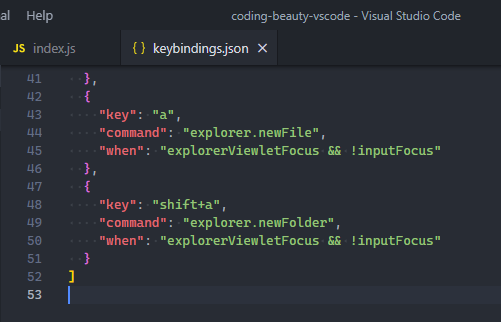

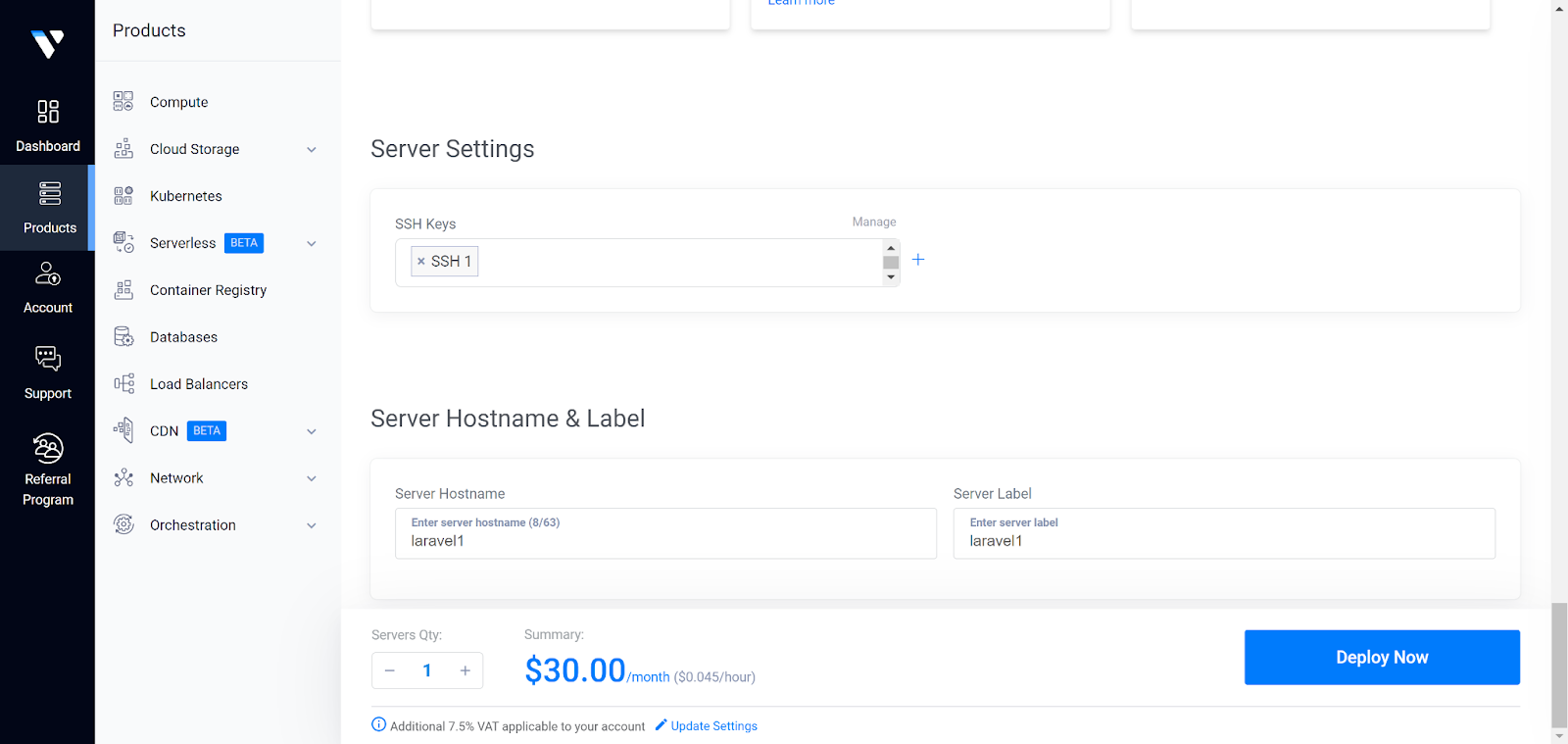

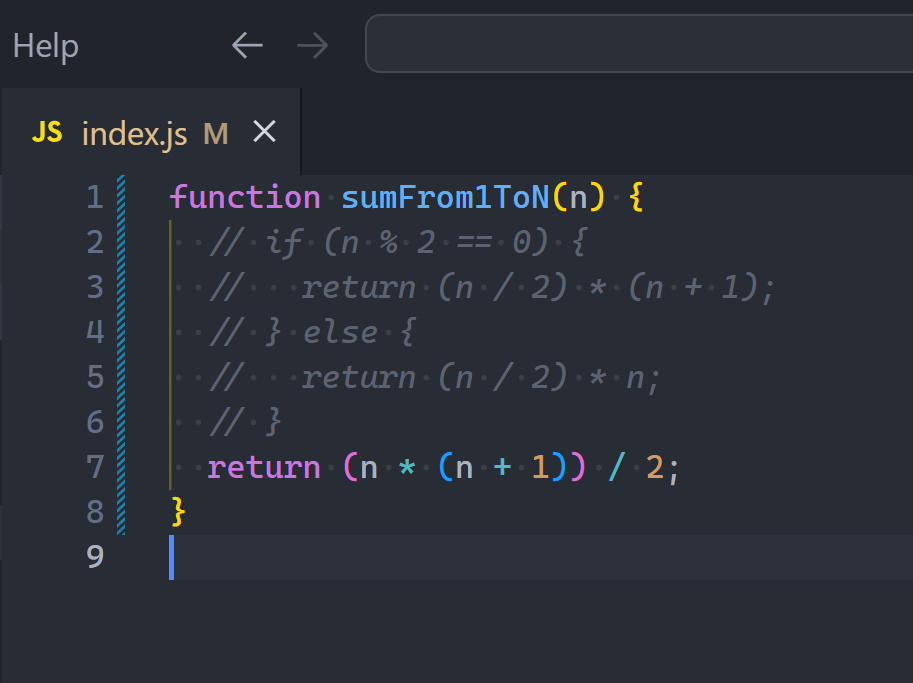

1. TODO comments

Perfect for tasks tied to a highly specific part of the codebase.

Instead of creating a project task saying: “come up with something better than the for loop in counter/index.js line 2”

You just add a TODO comment right there, where all the context already is.
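As a rough sketch of what that could look like (the `sumUpTo` function and its body are hypothetical, loosely modeled on the `counter/index.js` example above):

```javascript
// Hypothetical counter module; the function name and body are assumptions.
function sumUpTo(limit) {
  // TODO: replace this loop with the closed form limit * (limit + 1) / 2
  let total = 0;
  for (let i = 1; i <= limit; i++) {
    total += i;
  }
  return total;
}

console.log(sumUpTo(4)); // 10
```

The note sits next to the exact lines it is about, so nobody has to go hunting for "line 2" after the file changes.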

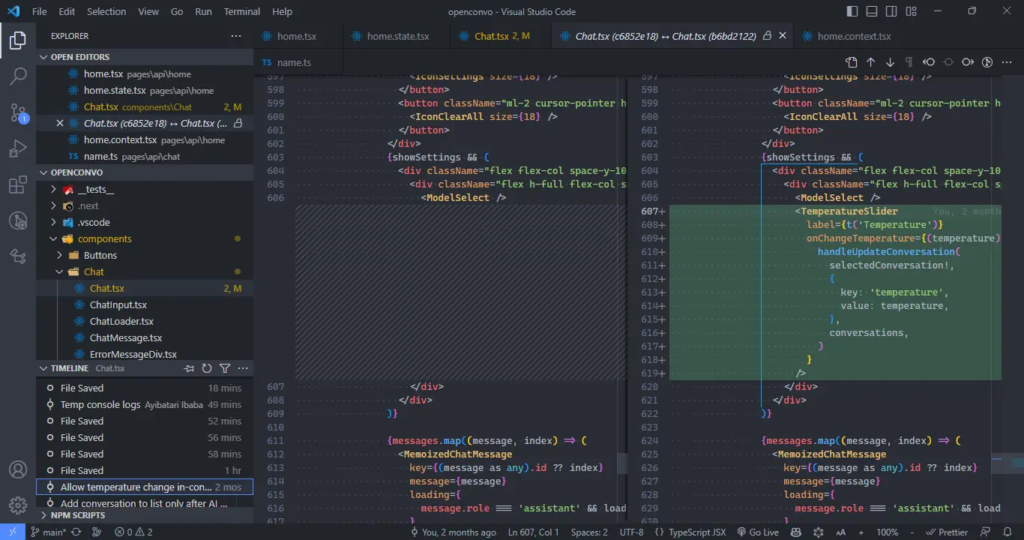

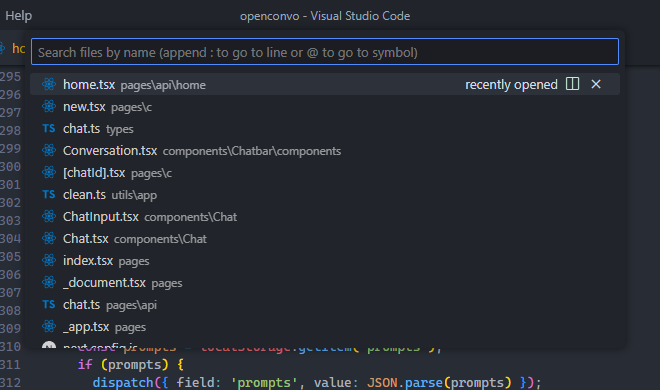

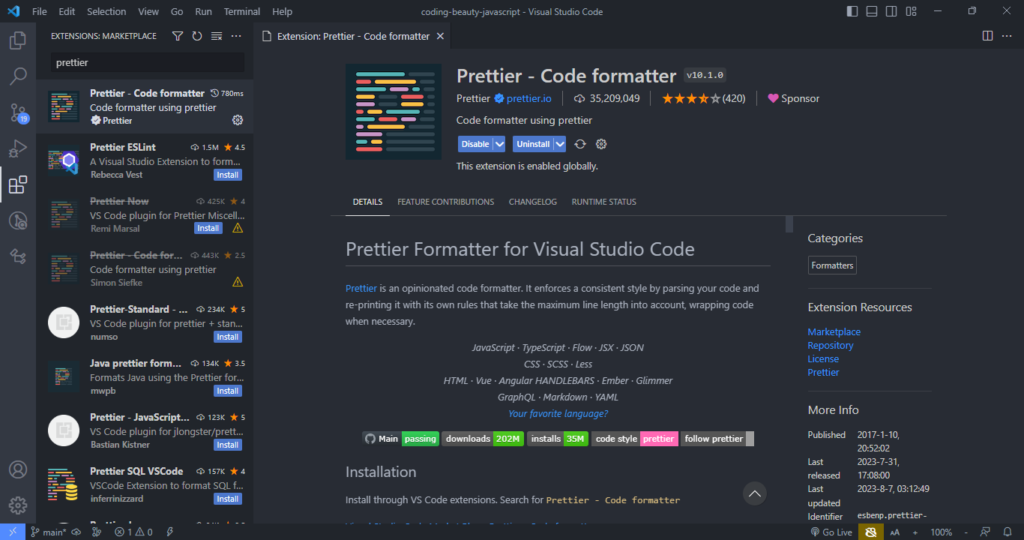

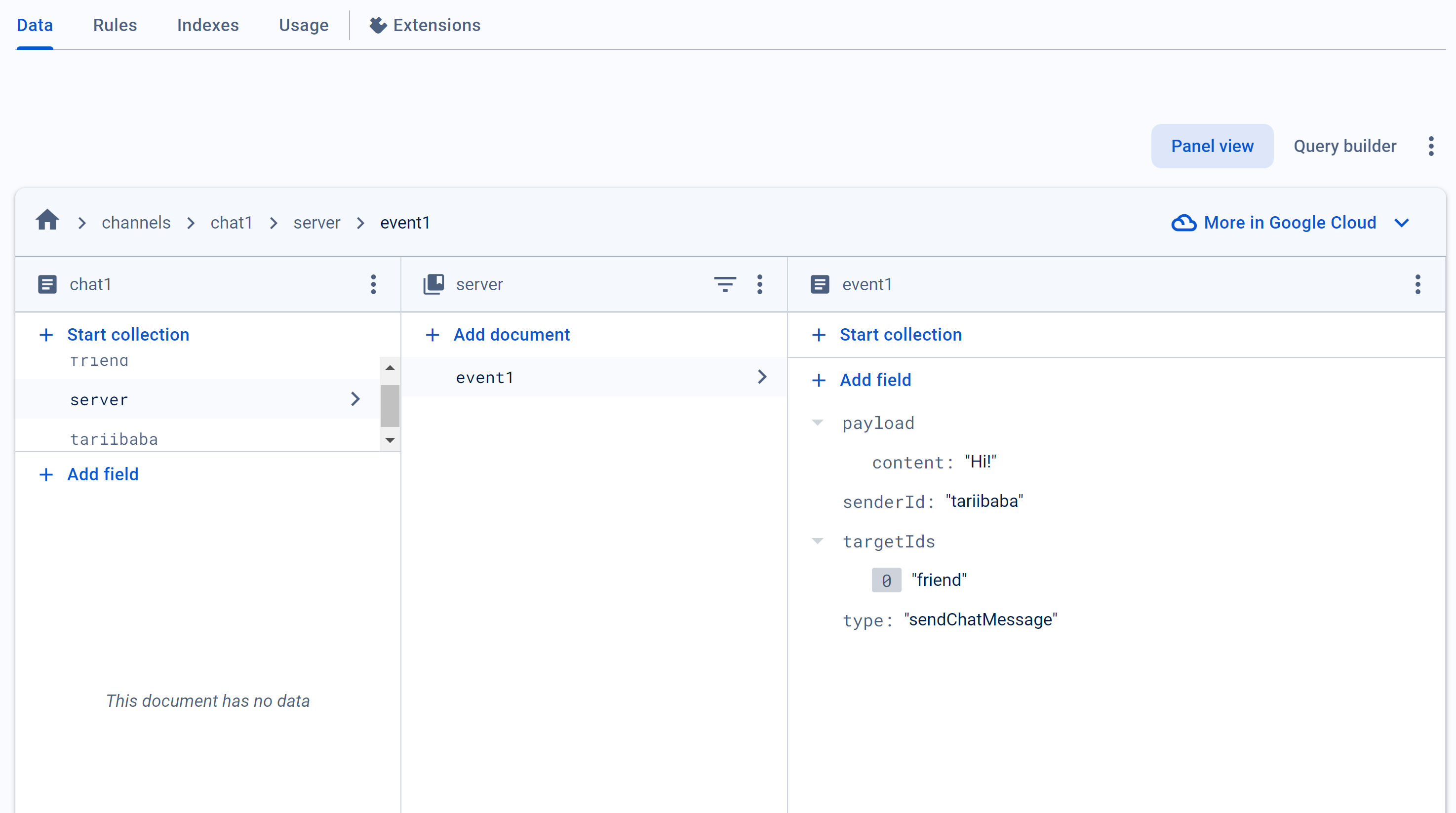

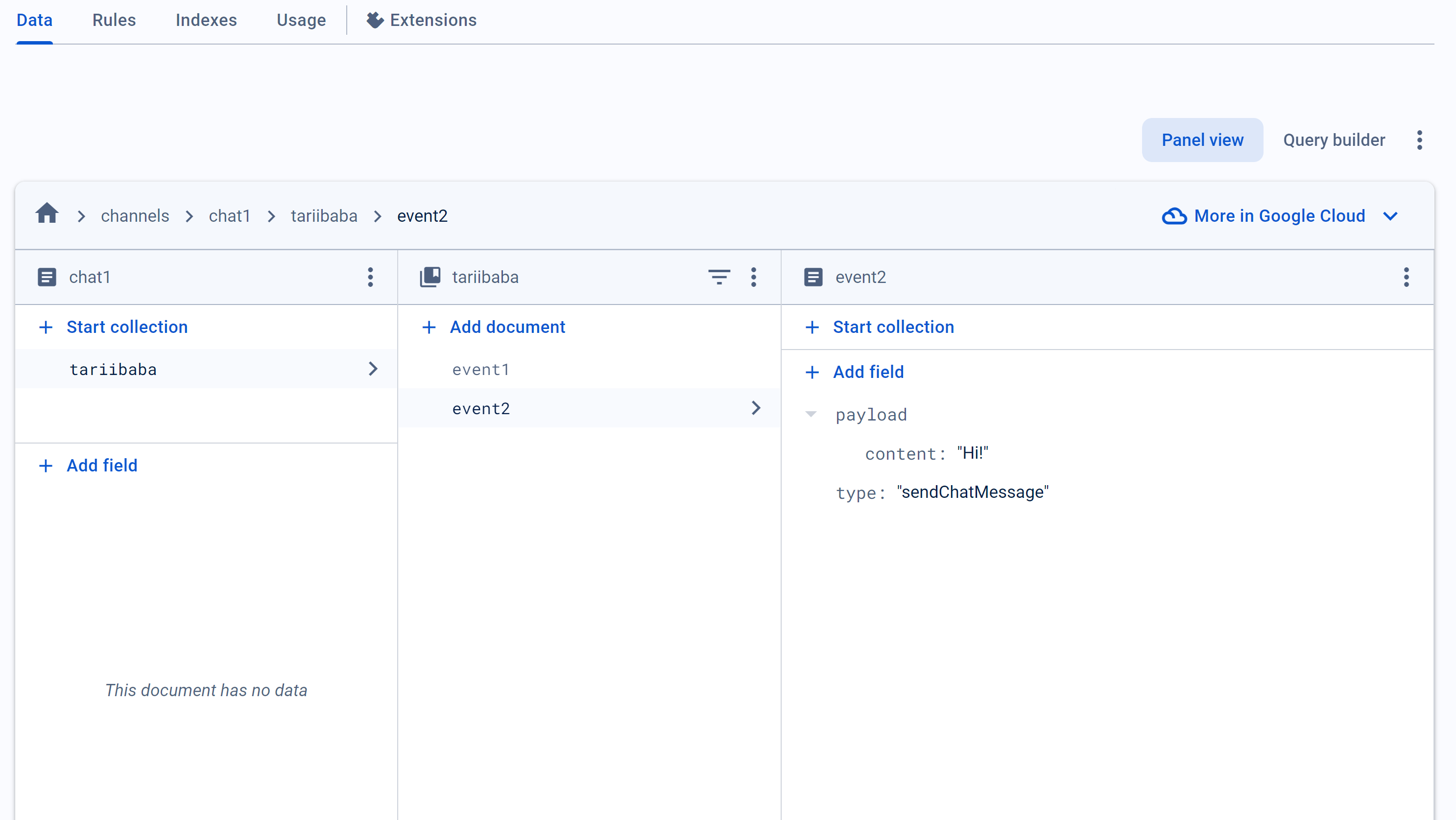

2. Public APIs

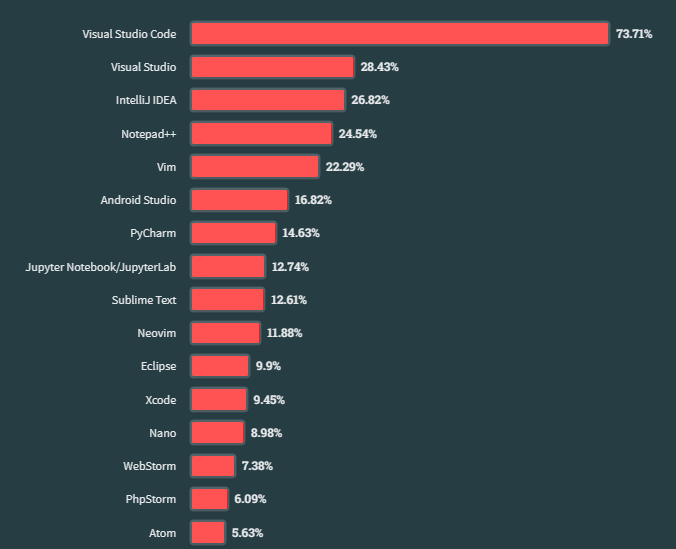

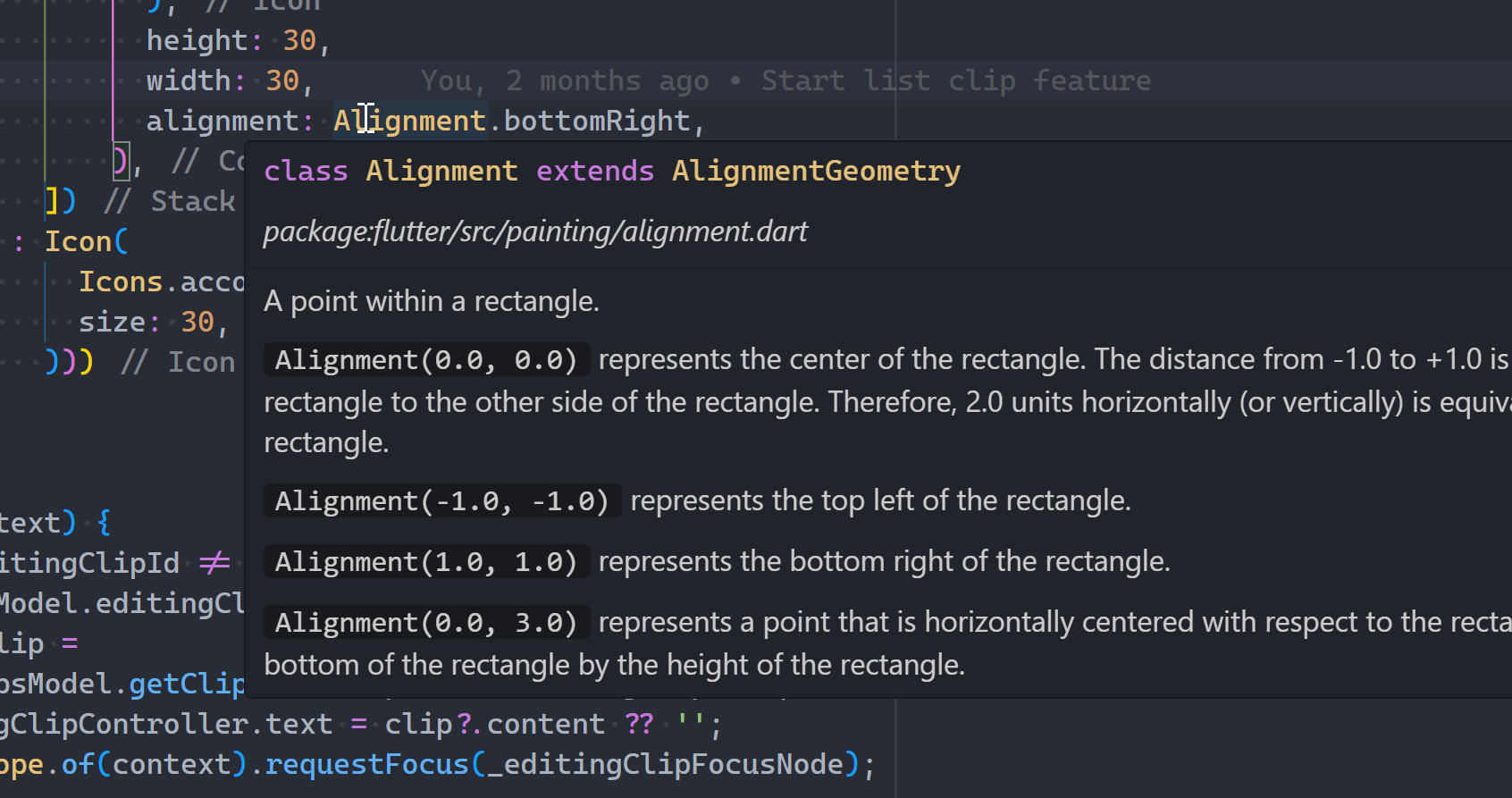

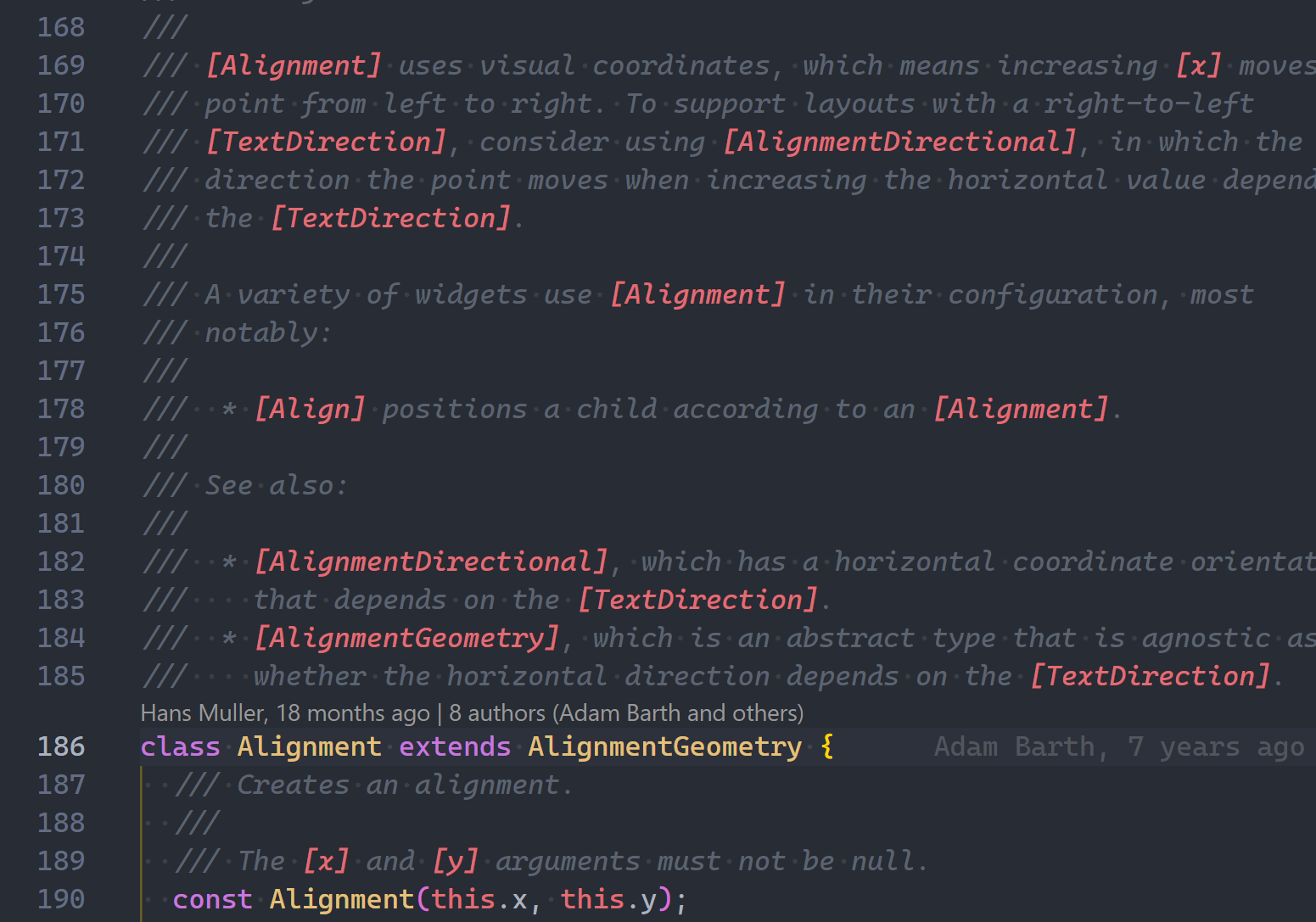

One reason people love Flutter is the amazingly extensive documentation — both online and offline:

All of it comes from the massive number of comments in the codebase:

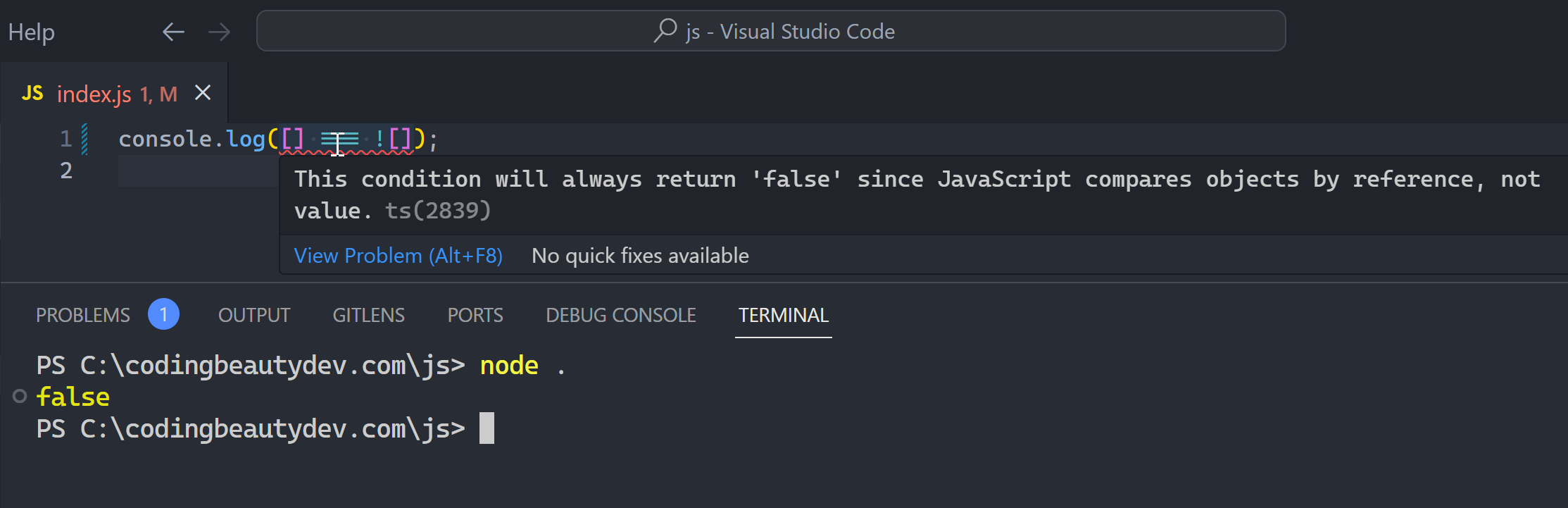
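The same idea carries over to JavaScript through JSDoc-style comments, which editors and documentation generators can read. Here is a minimal sketch reusing `calculateVolume` from earlier; the tags shown are one common JSDoc convention, not the format Flutter itself uses:

```javascript
/**
 * Calculates the volume of a rectangular box.
 *
 * @param {Object} dimensions
 * @param {number} dimensions.length - Length of the box.
 * @param {number} dimensions.width - Width of the box.
 * @param {number} dimensions.height - Height of the box.
 * @returns {number} The volume, in cubic units of the inputs.
 */
function calculateVolume({ length, width, height }) {
  return length * width * height;
}

console.log(calculateVolume({ length: 10, width: 20, height: 30 })); // 6000
```

Here the comment is aimed at people who will never open the function body, which is what makes it worth keeping.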

3. Why did I do this?

Sometimes you need to explain why code is a certain way from a bigger-picture perspective.

Like a warning:

// Keep it at 10 or else the server will crash in v18.6.0

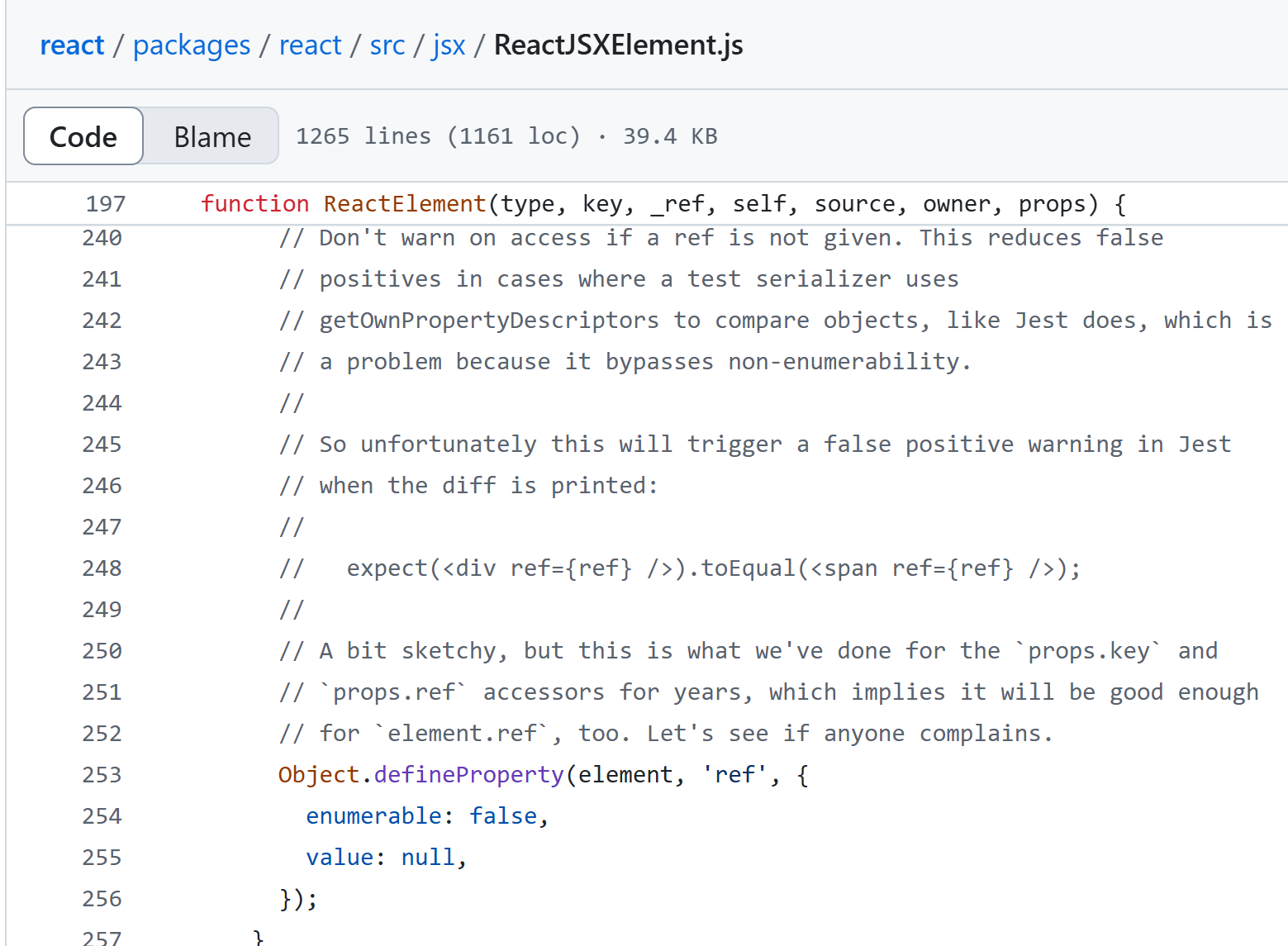

const param = 10;Or check out this from React’s source code:

Yeah it’s pretty damn tough to put that gigantic explanation into code form. Here comments do make sense.
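Another comment of this kind, as a hypothetical sketch. `clampTimerDelay`, `scheduleReminder`, and `MAX_TIMER_DELAY_MS` are made-up names, while the cap on `setTimeout` delays is real behavior in browsers and Node.js:

```javascript
// Browsers and Node.js cap setTimeout delays at 2^31 - 1 ms (about 24.8 days).
// A larger value makes the timer fire almost immediately instead of later,
// so longer delays are clamped rather than passed through.
const MAX_TIMER_DELAY_MS = 2 ** 31 - 1;

function clampTimerDelay(delayMs) {
  return Math.min(delayMs, MAX_TIMER_DELAY_MS);
}

// Hypothetical helper, shown only to give the clamp a caller.
function scheduleReminder(callback, delayMs) {
  return setTimeout(callback, clampTimerDelay(delayMs));
}

console.log(clampTimerDelay(5000)); // 5000
console.log(clampTimerDelay(3000000000)); // 2147483647
```

No variable name could carry that explanation, so the comment stays.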

Final thoughts

Always look for ways to show intent directly in code before pulling out those forward slashes.

Comments give you one more thing to maintain, and in most cases what you actually need is a refactor.

Let code lead.