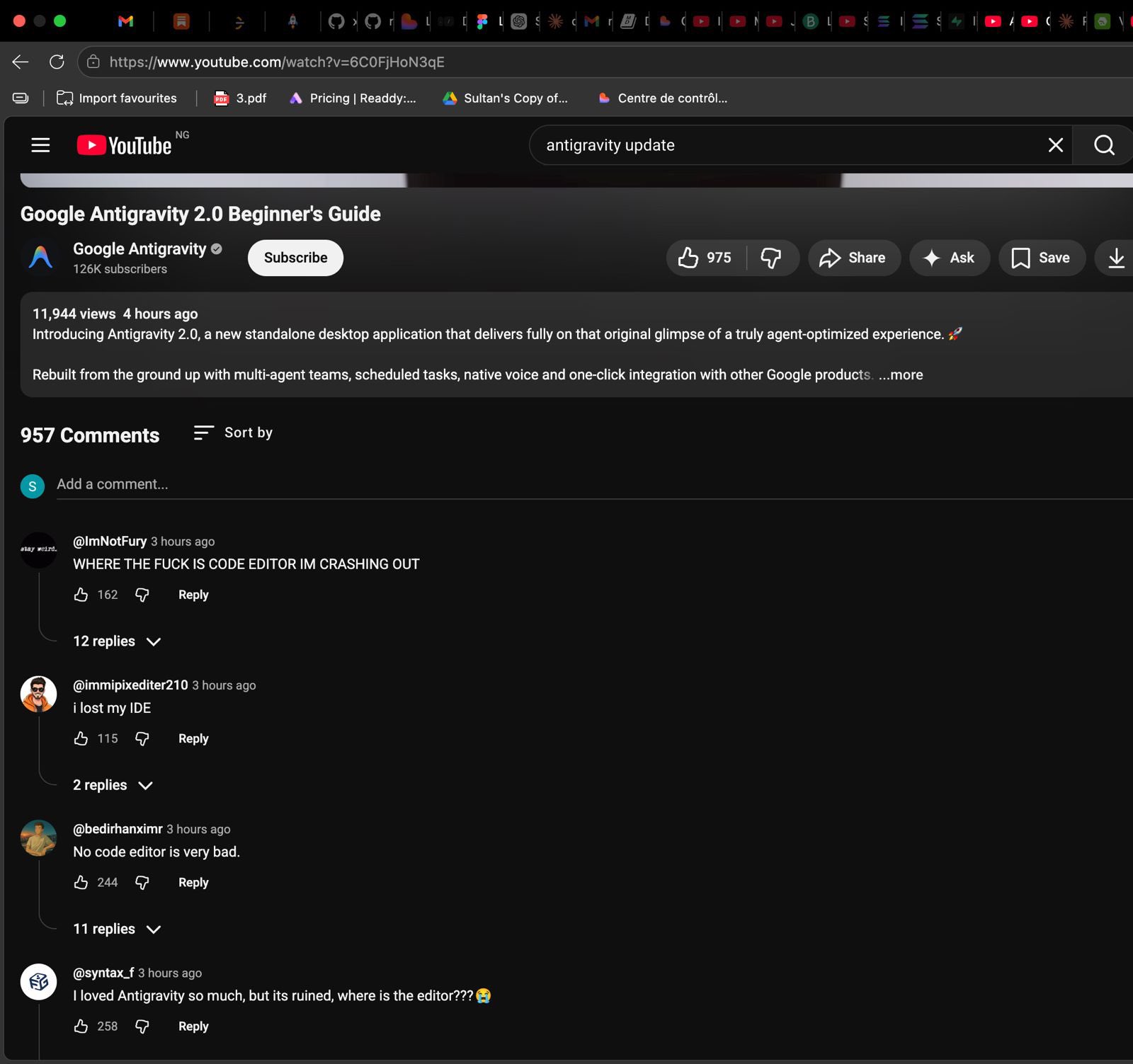

Grok’s new AI coding agent is absolutely incredible

Wow this is huge.

Elon Musk’s xAI just released a brilliant new coding agent with unbelievable new features — and the developer community has been going absolutely wild.

Grok Build is here to unleash the full power of the most advanced Grok models in every software development task imaginable.

- 2 million token context window (!)

- Brand new goal-centric plan mode

- Innovative new headless automation mode

These are just a few of the things Grok Build brings to the table.

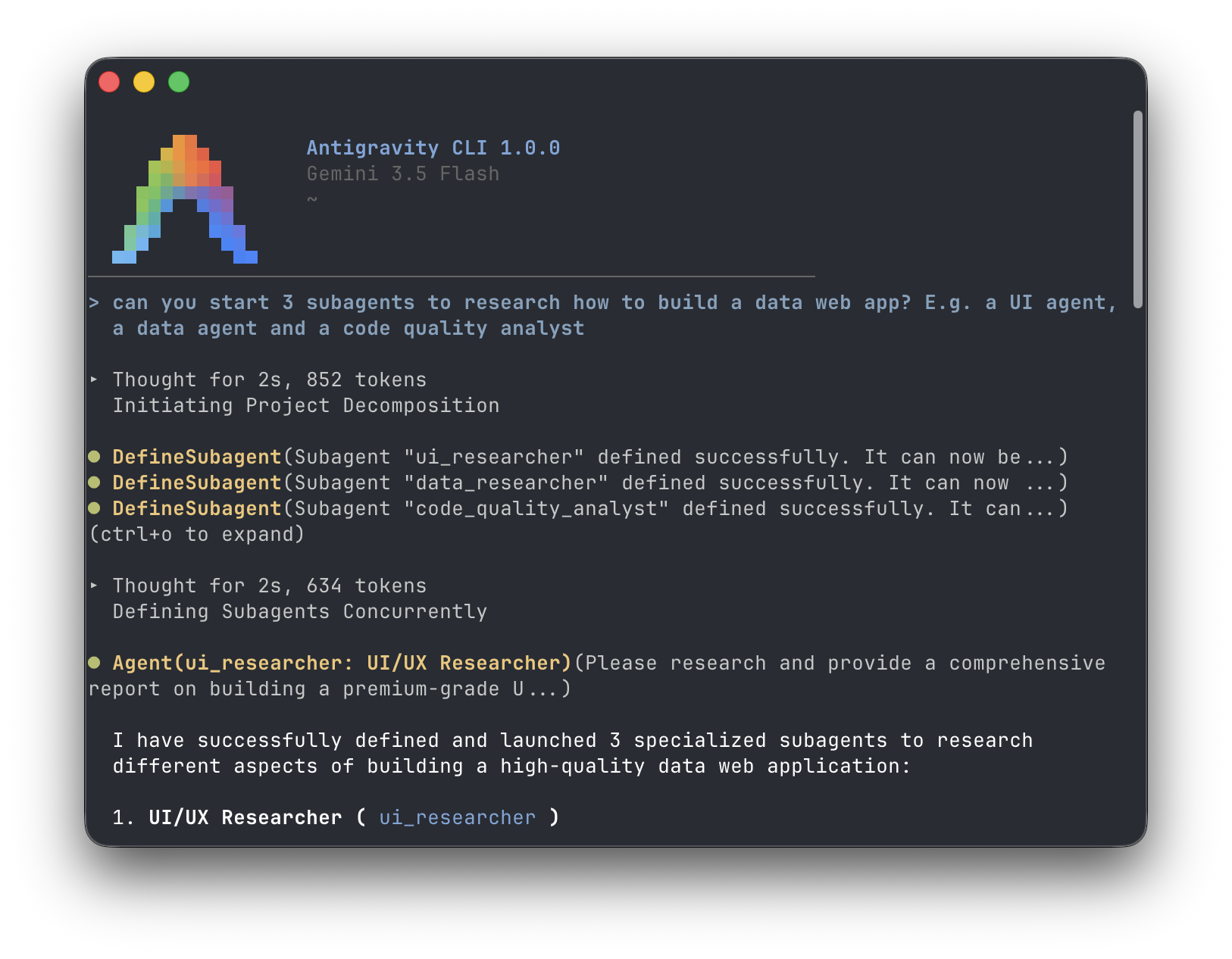

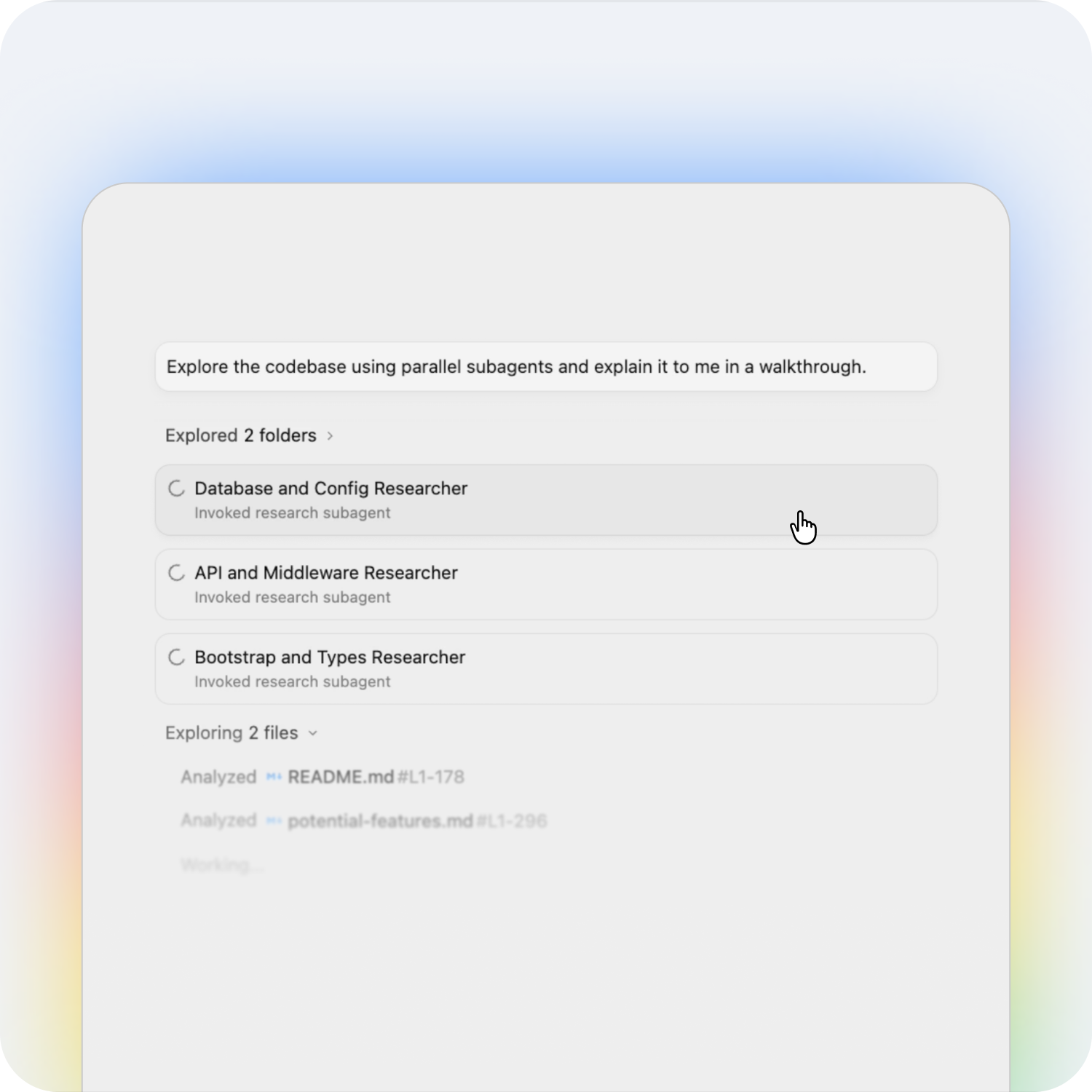

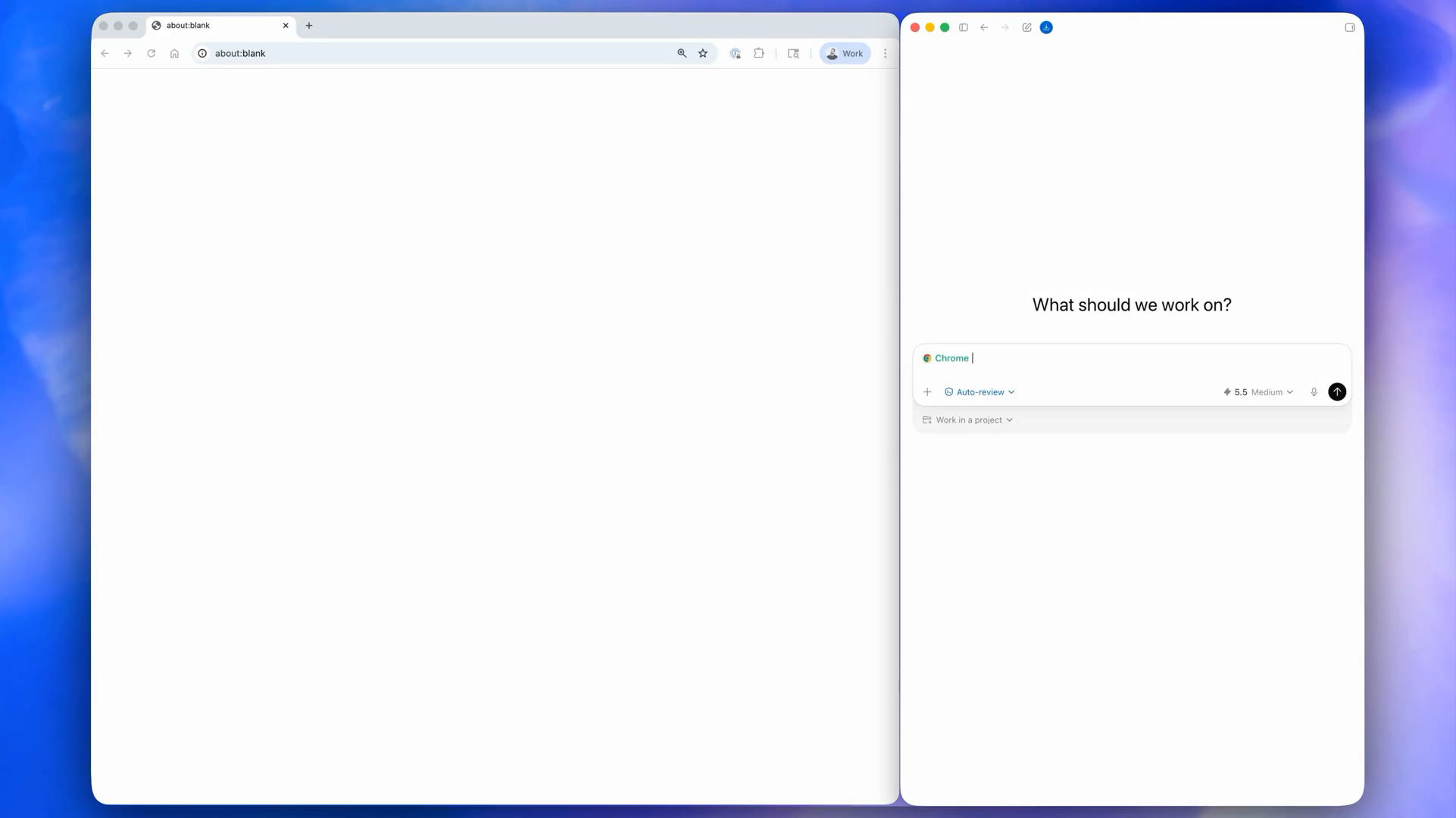

Subagents working in parallel in Grok Build:

Let’s take a look at 5 of these features and the massive benefits they provide in our development workflows.

1. Industry-leading 2 million token context window

Context is still one of the biggest limitations of current coding agents.

Large projects often contain huge codebases, extensive documentation, configuration files, and dependency trees that exceed what most AI models can effectively keep in memory.

Grok Build is powered by Grok-4.3, which features a 2 million token context window, allowing developers to provide:

- Entire codebases

- Large documentation sets

- Technical specifications

- Dependency chains

- Historical implementation notes

all within a single session.

Instead of constantly re-explaining project structure, the model can maintain a much deeper understanding of the system it is working on.
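To get a rough sense of whether a project would fit in a window of that size, you can estimate its token count from file contents. The sketch below is a back-of-the-envelope check, not Grok's tokenizer: it assumes roughly 4 characters per token (a common rule of thumb), and the function names, the extension list, and the reserve size are all illustrative choices.

```python
import os

CONTEXT_WINDOW_TOKENS = 2_000_000  # figure quoted in the article
CHARS_PER_TOKEN = 4                # assumption: rough heuristic, not a real tokenizer
SOURCE_EXTENSIONS = {".py", ".js", ".ts", ".go", ".rs", ".java", ".md", ".json", ".yaml", ".yml", ".toml"}


def estimate_tokens(root: str) -> int:
    """Estimate the token count of all source-like files under root."""
    total_chars = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune hidden directories such as .git so they are never walked.
        dirnames[:] = [d for d in dirnames if not d.startswith(".")]
        for name in filenames:
            if os.path.splitext(name)[1].lower() in SOURCE_EXTENSIONS:
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total_chars += len(handle.read())
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, reserve_tokens: int = 200_000) -> bool:
    """Return True if the estimate leaves reserve_tokens free for the conversation."""
    return estimate_tokens(root) + reserve_tokens <= CONTEXT_WINDOW_TOKENS
```

Calling `fits_in_context(".")` from a project root gives a quick yes/no answer. The real count depends on the model's tokenizer, so treat the result as an order-of-magnitude estimate.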

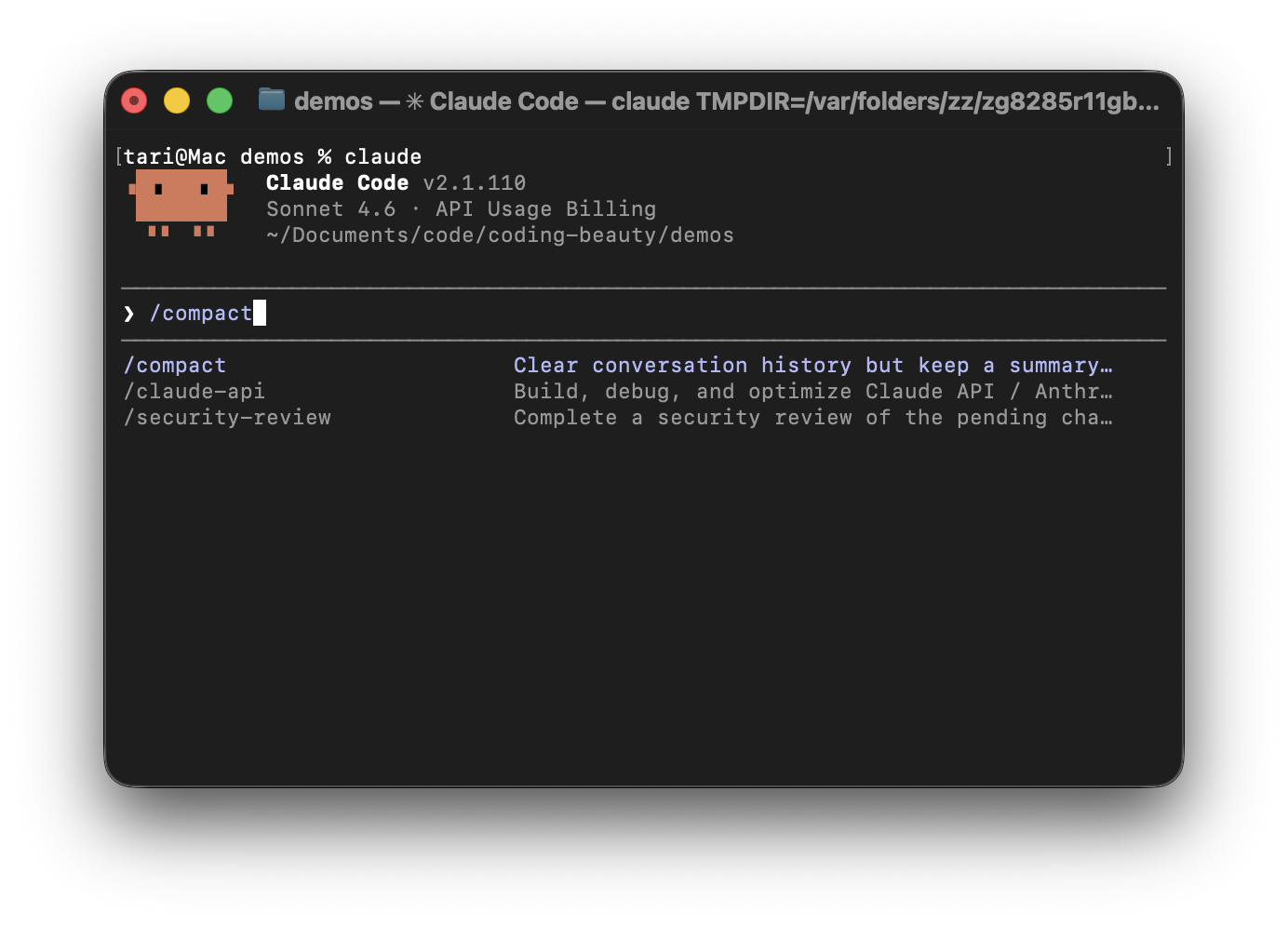

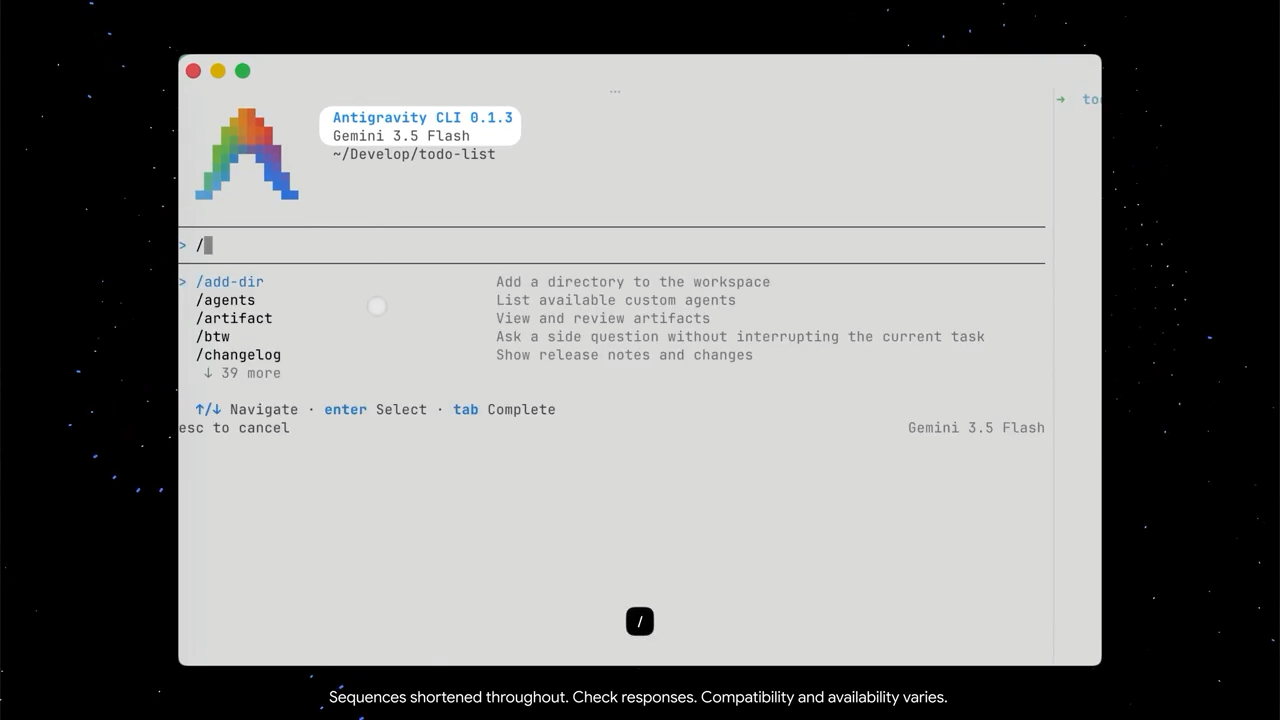

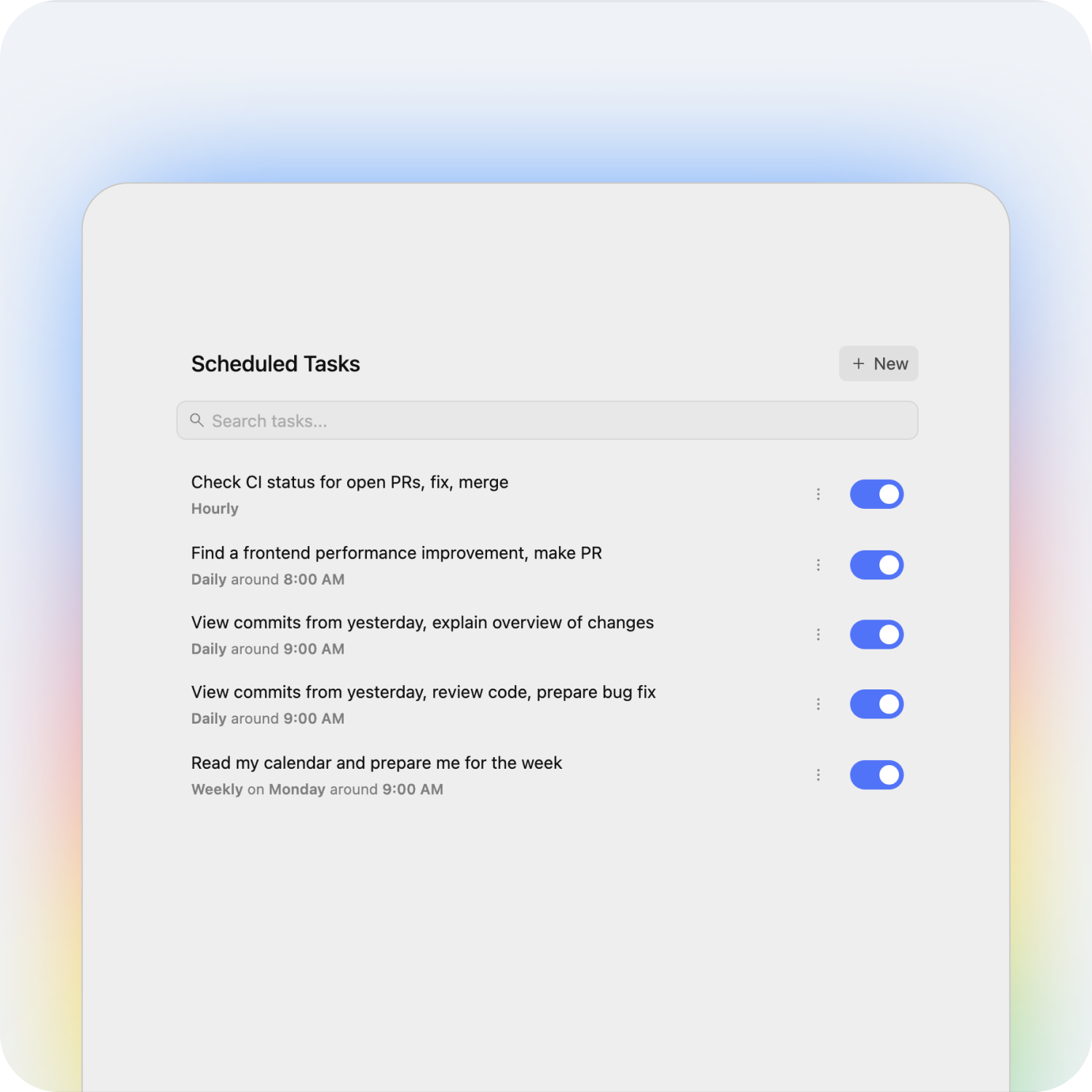

2. Headless automation mode

Headless automation will completely transform how you approach development.

Instead of requiring an interactive terminal session, Grok Build can run inside:

- CI/CD pipelines

- Cron jobs

- Automation scripts

- Build systems

This allows engineering teams to automate routine development work.

For example, Grok Build could:

- Review pull requests overnight

- Refactor legacy code

- Update documentation

- Identify technical debt

- Perform maintenance tasks

without requiring a developer to actively supervise every step.
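As a rough illustration of what "headless" means in practice, here is a minimal sketch of a wrapper that a cron job or CI step could call. The article does not give the actual Grok Build command line, so the command is a caller-supplied placeholder, and passing the task on stdin is an assumption made for this example only.

```python
import subprocess
import sys
from typing import Sequence


def run_headless(command: Sequence[str], prompt: str, timeout_s: int = 1800) -> tuple[int, str]:
    """Run an agent command without a terminal and return (exit_code, combined_output).

    `command` stands in for however the agent is really invoked; no real
    Grok Build flags are assumed here.
    """
    try:
        result = subprocess.run(
            list(command),
            input=prompt,         # assumption: the task is passed on stdin
            capture_output=True,  # collect output for logs instead of a TTY
            text=True,
            timeout=timeout_s,    # a hung job must not block the pipeline forever
        )
    except subprocess.TimeoutExpired:
        return 124, f"timed out after {timeout_s}s"
    return result.returncode, result.stdout + result.stderr


def echo_agent() -> list[str]:
    """Stand-in for a real agent: a Python one-liner that echoes the task from stdin."""
    return [sys.executable, "-c", "import sys; print('task:', sys.stdin.read())"]
```

A scheduled script would call something like `run_headless(echo_agent(), "Review open pull requests")`, write the output to a log, and exit with the returned code so that a failure is visible to the scheduler or CI system.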

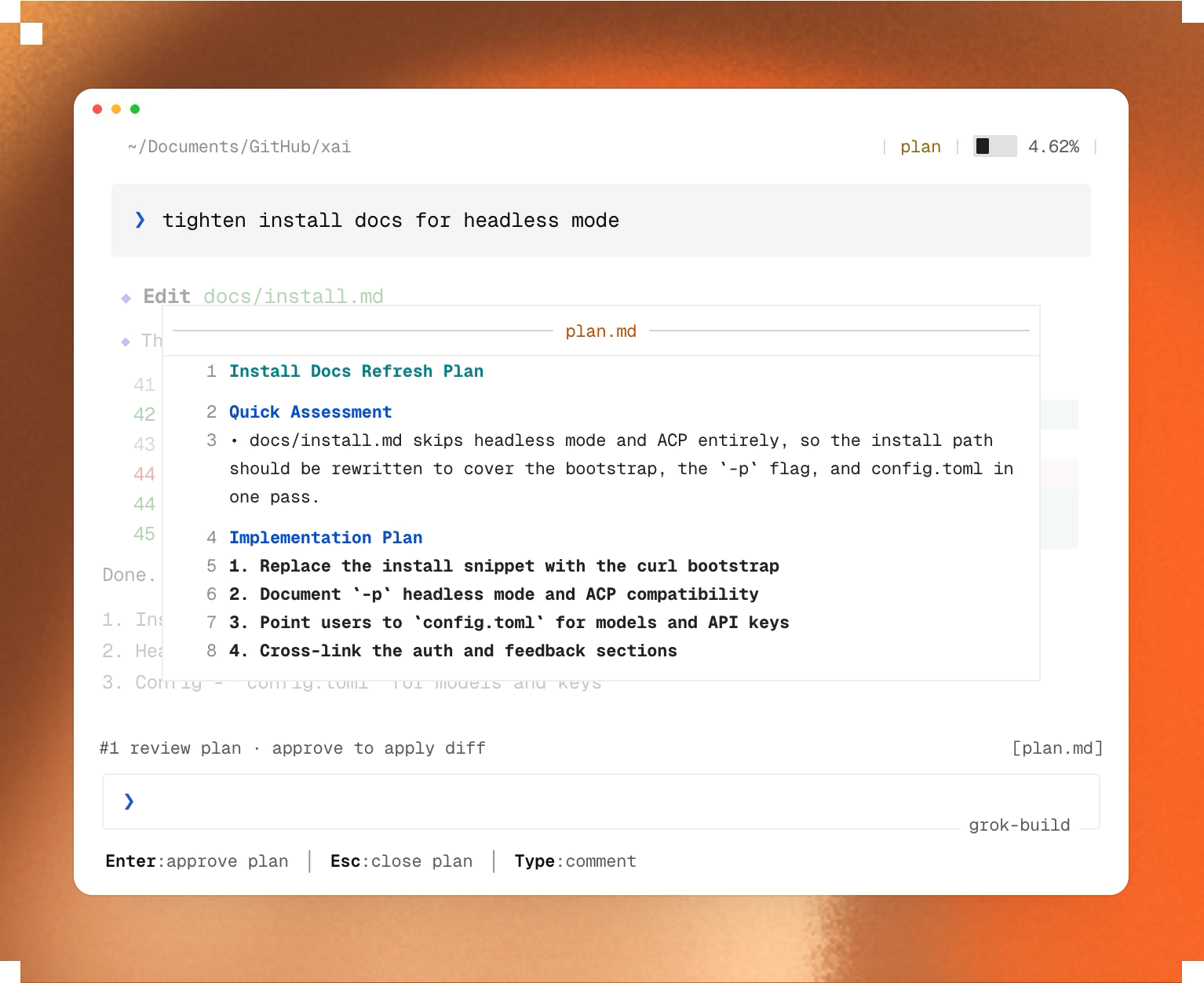

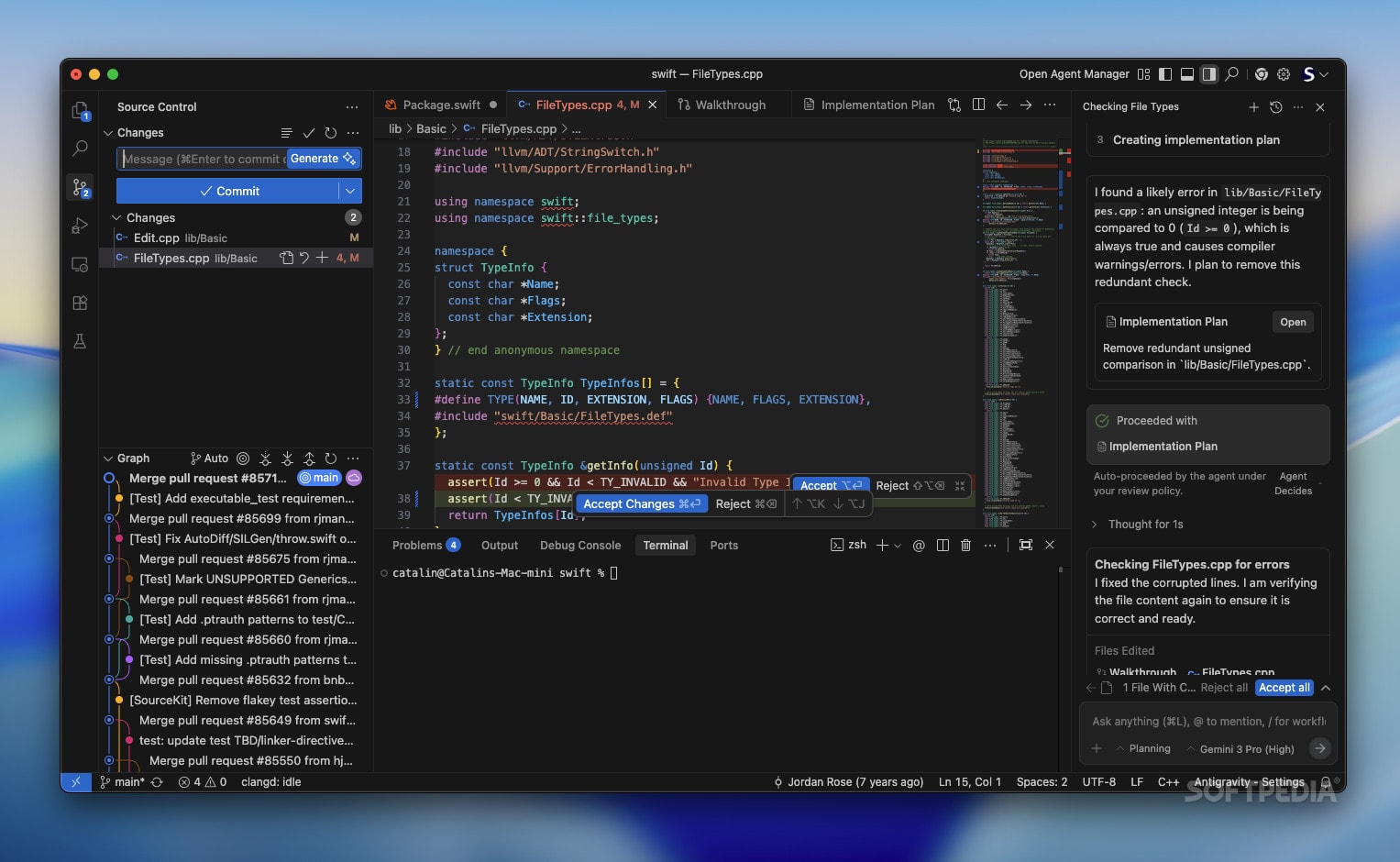

3. Plan mode lets you see everything

One of the biggest frustrations with autonomous coding agents is that they can spend twenty minutes modifying files before you realize they misunderstood the task.

Grok Build introduces a structured Plan Mode to solve this problem.

Before editing a single file, the agent generates a detailed execution plan showing:

- Which files will be modified

- The implementation steps

- Dependencies between tasks

- Areas of potential risk

Developers can review the plan, rewrite steps, leave comments, or approve it entirely.

Only after approval does Grok Build begin making changes, which are then presented as clean Git diffs.

So we end up with a workflow that keeps humans in control while still benefiting from automation.
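The plan-then-approve flow can be pictured as a small data structure with an approval gate. This is a hypothetical sketch, not Grok Build's actual plan format: the `PlanStep` and `Plan` classes and their field names are invented here to mirror the four items listed above (files, steps, dependencies, risks).

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class PlanStep:
    step_id: str
    description: str
    files: list[str]
    depends_on: list[str] = field(default_factory=list)
    risk: str = ""


@dataclass
class Plan:
    steps: list[PlanStep]
    approved: bool = False

    def execution_order(self) -> list[str]:
        """Order step ids so every step runs after the steps it depends on."""
        graph = {step.step_id: set(step.depends_on) for step in self.steps}
        return list(TopologicalSorter(graph).static_order())

    def files_touched(self) -> list[str]:
        """List every file the plan would modify, without duplicates."""
        return sorted({path for step in self.steps for path in step.files})


def execute(plan: Plan) -> list[str]:
    """Refuse to run until a human has approved the plan."""
    if not plan.approved:
        raise PermissionError("plan has not been approved")
    return plan.execution_order()
```

The key design point is that `execute` fails loudly on an unapproved plan, so nothing touches the working tree until a reviewer has seen the file list and the step order.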

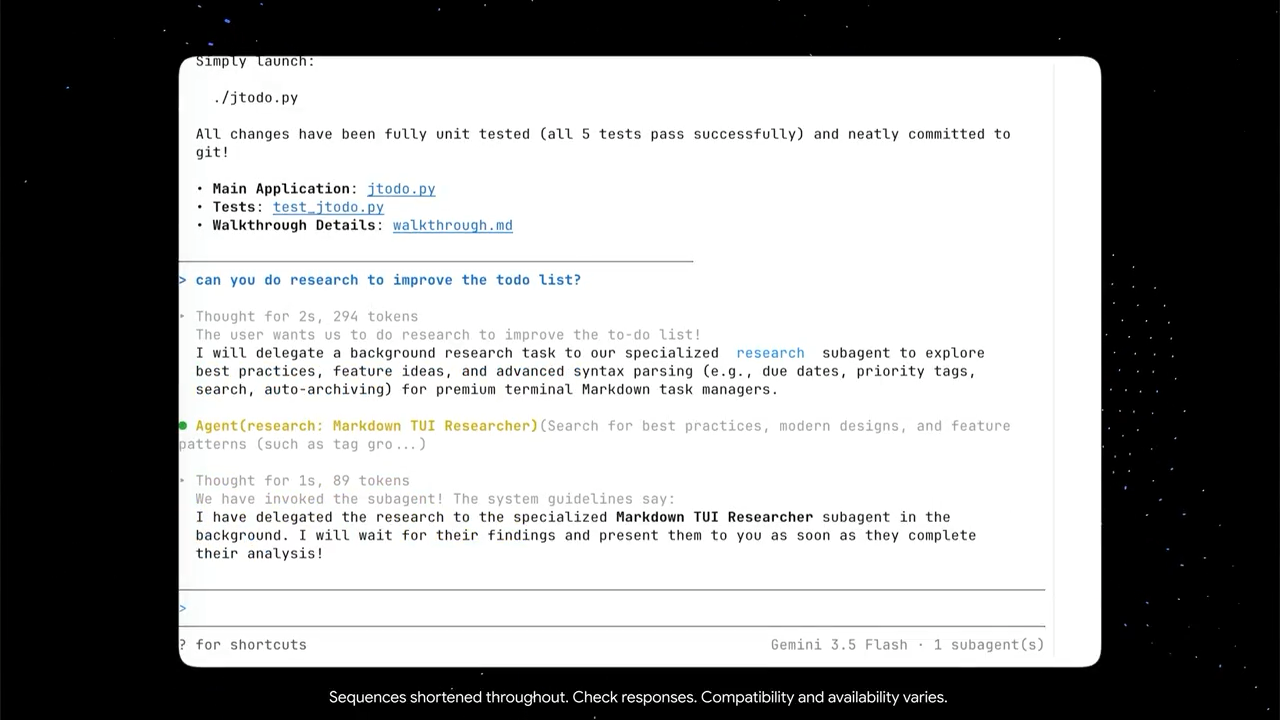

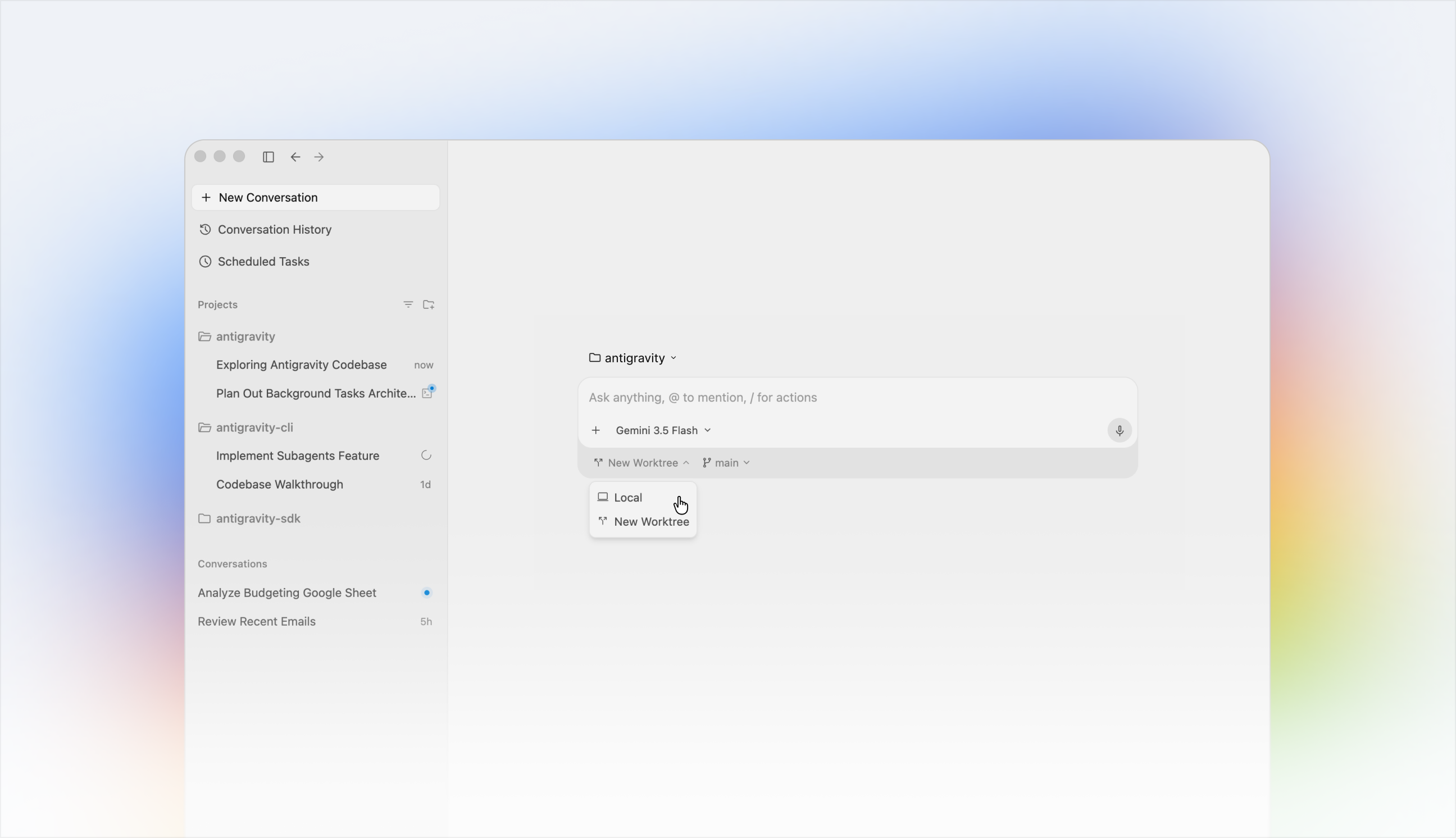

4. Parallel subagents and Git worktrees

Complex features often require changes across multiple parts of a project.

Rather than handling everything sequentially, Grok Build can split a large task into smaller pieces and launch up to eight specialized subagents to work simultaneously.

To avoid conflicts, each subagent operates inside its own Git worktree and branch.

This provides several advantages:

- Faster execution through parallel work

- Isolation between agent tasks

- Fewer merge conflicts

- Easier review and validation

Instead of multiple agents fighting over the same files, each works independently before the final results are merged together.
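The isolation comes from a standard Git feature, `git worktree add -b <branch> <path>`, which checks out a new branch into its own directory. Here is a minimal sketch of how one worktree per task could be set up. The `agent/<task>` branch naming and the sibling-directory layout are assumptions for illustration, and task names are expected to be short slugs such as `api` or `ui`.

```python
import subprocess
from pathlib import Path

MAX_SUBAGENTS = 8  # limit quoted in the article


def worktree_commands(repo: str, tasks: list[str], base: str = "HEAD") -> list[list[str]]:
    """Build one `git worktree add` command per task, each on its own new branch."""
    if len(tasks) > MAX_SUBAGENTS:
        raise ValueError(f"at most {MAX_SUBAGENTS} parallel tasks are supported")
    repo_path = Path(repo).resolve()
    commands = []
    for task in tasks:
        branch = f"agent/{task}"  # hypothetical naming scheme
        # Place each worktree next to the main checkout, e.g. app-api beside app.
        path = str(repo_path.parent / f"{repo_path.name}-{task}")
        commands.append(["git", "-C", str(repo_path), "worktree", "add", "-b", branch, path, base])
    return commands


def create_worktrees(repo: str, tasks: list[str]) -> None:
    """Run the commands; requires git and a repository with at least one commit."""
    for command in worktree_commands(repo, tasks):
        subprocess.run(command, check=True)
```

After the work is done, each branch can be reviewed and merged like any other, and `git worktree remove <path>` cleans up the extra checkout.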

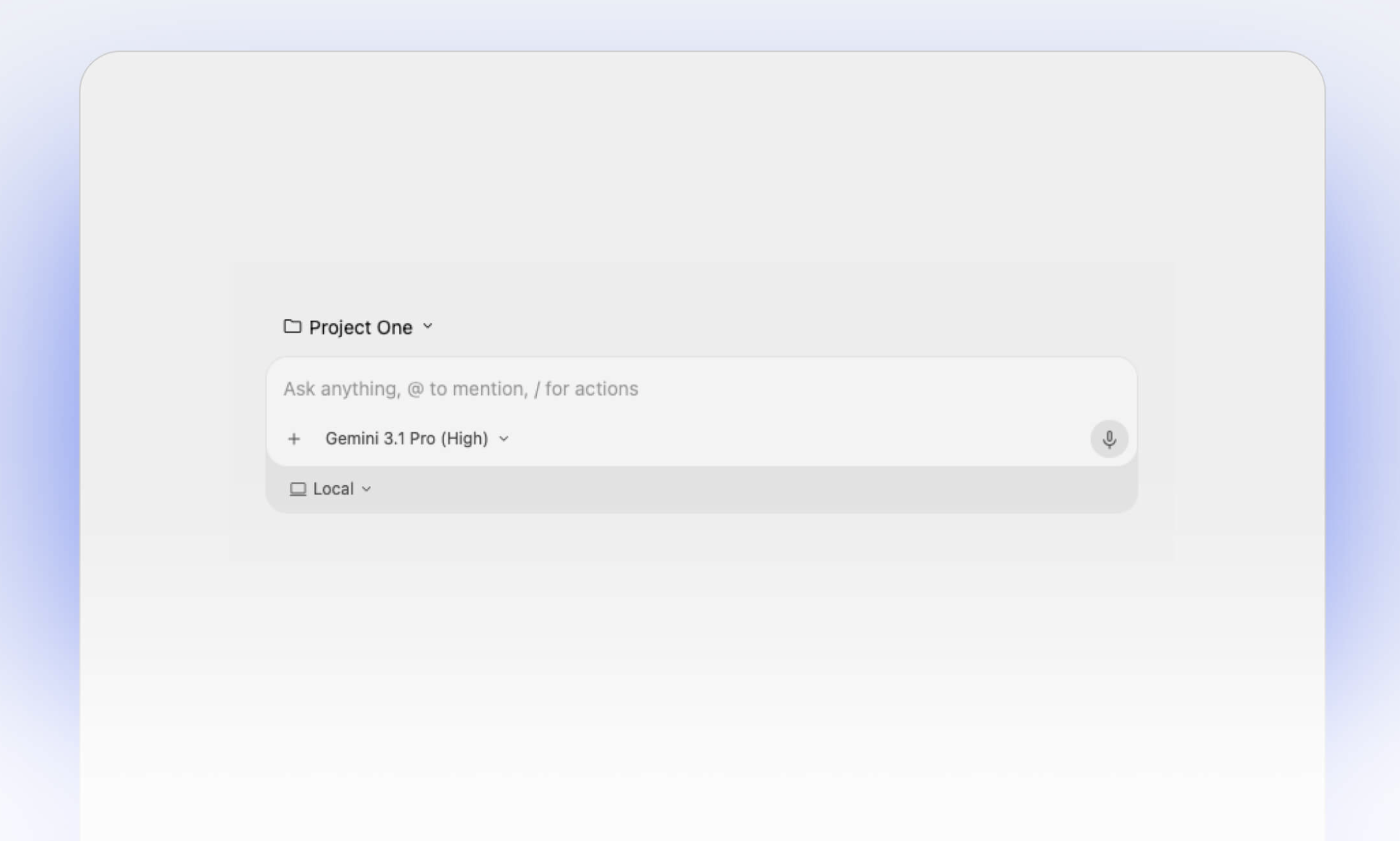

5. Works with all the tools you already use

Your skills, your hooks, your AGENTS.md, your plugins, everything.

MCP

Grok Build supports Anthropic’s Model Context Protocol out of the box.

This allows teams to connect the agent directly to:

- Internal APIs

- Proprietary databases

- Documentation systems

- Custom tools and workflows

Rather than forcing organizations to change their infrastructure, Grok Build can plug into what already exists.
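Under the hood, MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with the `tools/call` method. The sketch below builds such a request and reads back a text result. The helper names are my own, any tool name you pass (for example `lookup_ticket`) is hypothetical, and the transport (stdio or HTTP) is left out entirely.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests need a unique id per call


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


def text_from_result(raw_response: str) -> str:
    """Join the text blocks of a `tools/call` result, raising if an error was reported."""
    response = json.loads(raw_response)
    if "error" in response:  # protocol-level failure, e.g. unknown method
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    text = "\n".join(
        block["text"] for block in result.get("content", []) if block.get("type") == "text"
    )
    if result.get("isError"):  # the tool itself ran and failed
        raise RuntimeError(text)
    return text
```

In a real integration, an MCP client library would handle this framing for you. The point here is only that exposing an internal API to an agent comes down to describing it as a named tool with JSON arguments.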

ACP (Agent Communication Protocol)

It also supports Agent Communication Protocol (ACP).

ACP enables external tools and IDEs to communicate directly with the agent environment, making integrations with platforms like VS Code, Cursor, JetBrains, and custom developer tools significantly easier.

What makes Grok Build interesting isn’t any single feature — it’s how these features work together.

The massive context window helps the agent understand entire systems. Plan Mode adds transparency and control. Parallel subagents accelerate execution. MCP and ACP provide extensibility. Headless mode enables automation beyond the desktop.

Together, they push AI beyond simple code generation and toward something much closer to a true software engineering agent.