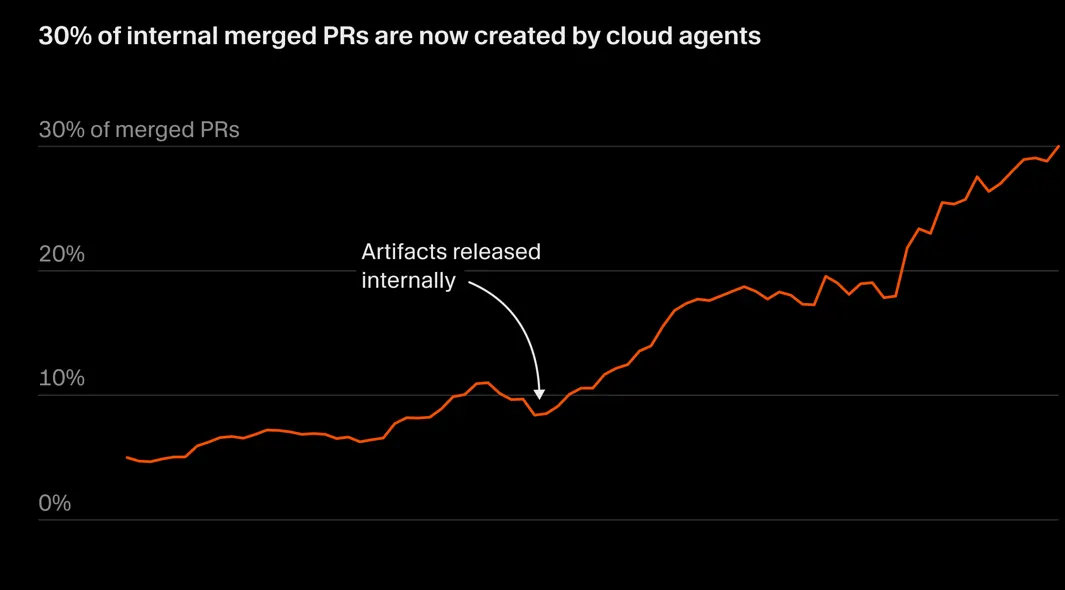

30% of internal merged PRs at Cursor are now created by Cloud agents.

We’ve had autocomplete. We’ve had chat-based coding assistants. We’ve had agents that can open a repo and make a pull request.

This is something different entirely — this is the next generation of AI-assisted coding.

The agents are writing themselves

These agents don’t just suggest code. They take the wheel, build features, open PRs, and ship to production on their own, all by controlling a virtual computer.

We are no longer talking about AI agents writing code faster or with greater accuracy.

We are now firmly in the era of the self-driving codebase.

The Cursor team asked an agent to add GitHub source links to each component on their Marketplace plugin pages.

The agent implemented the feature end-to-end — then it recorded itself clicking each component to verify the links worked correctly.

We’ve already seen major strides toward giving AI agents a higher level of autonomy, beyond just modifying the codebase according to prompts.

We saw this with Previews from Claude Code, where Claude Code now comprehensively tests your app and fixes any detected runtime bugs in real time.

Now we are seeing it with Cursor agents, which can control their own computers, not just their codebase.

We are in the age of handing AI full computer control, letting it run in parallel, validate its own work, and hand you something that’s ready to merge — complete with demos and high-level descriptions of everything it did.

This isn’t just a genius senior developer anymore.

This is an entire freaking development team. And you just became the executive.

Full computer control

Most AI coding tools live inside text. They edit files, maybe run a command, maybe see the output. But they don’t really use the software they’re building.

Cursor’s newer cloud agents change that. They run inside isolated virtual machines. They can open the browser. Click through flows. Start servers. Inspect logs. Take screenshots. Record videos. In other words, they don’t just write the feature — they experience it.

That’s a big deal.

Because once an agent can use the product, it stops being just an intelligent assistant stuck inside the codebase — and starts behaving more like an engineer. It can try something, see what breaks, fix it, and repeat. The ceiling gets much higher when the AI isn’t blind to the environment.

Parallelization as a first principle

Instead of one agent slowly working through a task, Cursor experiments with fleets of them. Hundreds, in some cases. But throwing more agents at a problem doesn’t magically make things better. Without structure, they step on each other, block on shared resources, or get stuck playing it safe.

So the system borrows from organizational design. A top-level planner owns the big goal. Sub-planners break that goal into chunks. Workers execute in isolation. Planning and execution both happen in parallel.
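To make the hierarchy concrete, here is a minimal Python sketch of the planner, sub-planner, and worker split. It is not Cursor's implementation: the function names (`plan`, `sub_plan`, `work`, `run`) and the way the goal is split are assumptions made up for illustration, and real agents would replace the string formatting with actual planning and execution. It also simplifies by running sub-planning and execution as two parallel stages rather than fully overlapping them.

```python
from concurrent.futures import ThreadPoolExecutor


def plan(goal: str) -> list[str]:
    """Top-level planner: split the big goal into chunks (hypothetical split)."""
    return [f"{goal}: backend", f"{goal}: frontend"]


def sub_plan(chunk: str) -> list[str]:
    """Sub-planner: break one chunk into worker-sized tasks."""
    return [f"{chunk} / implement", f"{chunk} / test"]


def work(task: str) -> str:
    """Worker: executes one task in isolation, touching no shared state."""
    return f"done: {task}"


def run(goal: str, max_workers: int = 4) -> list[str]:
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Sub-planners run in parallel, one per chunk.
        task_lists = list(pool.map(sub_plan, plan(goal)))
        tasks = [task for chunk_tasks in task_lists for task in chunk_tasks]
        # Workers run in parallel, one per task; map() preserves task order.
        return list(pool.map(work, tasks))


if __name__ == "__main__":
    for line in run("add source links"):
        print(line)
```

Because each worker only receives its own task and returns its own result, nothing in this sketch can block on a shared resource, which is the property the structure is meant to buy.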

Software development stops looking like a solo craft and starts looking like systems management.

Self-validation and merge-ready output

Here’s the part that really changes the workflow: the output isn’t just code.

The agent runs the tests. If there aren’t tests, it can add them. It clicks through the UI to verify behavior. It resolves merge conflicts. It rebases. It checks logs.

And then it attaches artifacts.

Videos of the feature working. Screenshots of edge cases. Structured summaries explaining what changed and why. Logs showing that the server booted cleanly.
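One way to picture that bundle is as a small data structure attached to the pull request. The sketch below is purely illustrative: `Artifact`, `PullRequestEvidence`, the artifact kinds, and the file names are all hypothetical, not part of any Cursor API.

```python
from dataclasses import dataclass, field


@dataclass
class Artifact:
    kind: str  # e.g. "video", "screenshot", "summary", "log"
    path: str  # hypothetical location of the attached file


@dataclass
class PullRequestEvidence:
    tests_passed: bool
    artifacts: list[Artifact] = field(default_factory=list)

    def missing_kinds(self, required: set[str]) -> set[str]:
        """Return the required artifact kinds that are not attached yet."""
        return required - {a.kind for a in self.artifacts}

    def is_merge_ready(self, required: set[str]) -> bool:
        """Ready for human review only if tests pass and all evidence is present."""
        return self.tests_passed and not self.missing_kinds(required)


REQUIRED = {"video", "summary", "log"}
evidence = PullRequestEvidence(
    tests_passed=True,
    artifacts=[Artifact("video", "demo.mp4"), Artifact("summary", "SUMMARY.md")],
)
print(evidence.missing_kinds(REQUIRED))  # {'log'}
```

A check like `is_merge_ready` would only gate what reaches a reviewer. The decision to merge still belongs to a human.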

This matters because trust is the real bottleneck in AI-assisted development. A diff alone doesn’t tell you whether something works. Proof does.

When an agent hands you a pull request with evidence attached, your role shifts from “figure out what happened” to “decide whether this meets the bar.”

That’s a different posture.

Artifacts as proof of work

The artifacts aren’t fluff. They’re the connective tissue between autonomous execution and human judgment.

Think of them as receipts.

They reduce ambiguity. They shorten review cycles. They make it easier to delegate bigger chunks of work without losing visibility.

Instead of asking, “Did it actually work?” you can just watch it work.

Over time, that changes how much responsibility you’re willing to hand off.

The developer’s new job

All of this leads to the biggest shift: your role moves up a level.

If agents can execute, validate, and document, your leverage isn’t in typing. It’s in direction.

You define the goal. Clarify constraints. Shape the plan. Review outcomes. Decide what ships.

You spend less time authoring every line and more time navigating complexity. You become the orchestrator rather than the instrument.

This doesn’t make developers obsolete. It makes judgment more valuable. Taste. Prioritization. Architecture. Product sense.

The work doesn’t disappear. It changes altitude.

So is it really “self-driving”?

Not fully (yet).

Humans are still in the loop. They set intent and make the final call.

But the trajectory is clear. When software can control its environment, split work across many workers, validate its own results, and return merge-ready output with proof attached, it starts to resemble autonomy.

The self-driving codebase isn’t about replacing developers. It’s about amplifying them — and shifting the craft from line-by-line construction to high-level steering.

And once you’ve experienced that shift, it’s hard to go back.